Build a multi-tenant configuration system with tagged storage patterns


In modern microservices architectures, configuration management remains one of the most challenging operational concerns. Two gaps emerge as organizations scale: handling tenant metadata that changes faster than cache TTL allows, and scaling the metadata service itself without creating a performance bottleneck.

Traditional caching strategies force an uncomfortable trade-off: either accept stale tenant context (risking incorrect data isolation or feature flags), or implement aggressive cache invalidation that sacrifices performance and increases load on your metadata service. When tenant counts grow into the hundreds or thousands, this metadata service itself becomes a scaling challenge, particularly when different configuration types have vastly different access patterns.

The challenge intensifies when you need to support different storage backends for different configuration types. Some configurations are read at high frequency, an access pattern well suited to Amazon DynamoDB, while others benefit from the hierarchical organization and built-in versioning of AWS Systems Manager Parameter Store. Traditional solutions often force engineering teams into a corner: either build multiple configuration services (increasing operational overhead), or compromise on performance by using a single storage backend that isn’t optimized for every use case.

In this post, we demonstrate how you can build a scalable, multi-tenant configuration service using the tagged storage pattern, an architectural approach that uses key prefixes (like `tenant_config_` or `param_config_`) to automatically route configuration requests to the most appropriate AWS storage service. This pattern maintains strict tenant isolation and supports real-time, zero-downtime configuration updates through event-driven architecture, alleviating the cache staleness problem.
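To make the routing idea concrete, here is a minimal TypeScript sketch of prefix-based dispatch. The two prefixes come from this post; the `Backend` names, the `routeConfigKey` function, and the choice to strip the prefix before the lookup are illustrative assumptions, not the service’s actual code.

```typescript
// Hypothetical backend identifiers; the real service may name these differently.
type Backend = "dynamodb" | "parameter-store";

// Ordered prefix table (prefixes taken from the post). First match wins.
const PREFIX_ROUTES: ReadonlyArray<readonly [string, Backend]> = [
  ["tenant_config_", "dynamodb"],
  ["param_config_", "parameter-store"],
];

function routeConfigKey(key: string): { backend: Backend; name: string } {
  for (const [prefix, backend] of PREFIX_ROUTES) {
    if (key.startsWith(prefix)) {
      // Assumption: the tag is stripped so the backend sees only the logical name.
      return { backend, name: key.slice(prefix.length) };
    }
  }
  // Fail loudly instead of silently falling back to a default store.
  throw new Error(`No storage backend registered for key "${key}"`);
}
```

For example, `routeConfigKey("tenant_config_payment_gateway")` returns `{ backend: "dynamodb", name: "payment_gateway" }`, while a key with an unknown prefix throws.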

What you’ll learn:

- Implementing a multi-tenant data model with DynamoDB and Parameter Store

- Using the Strategy pattern for flexible storage backend switching

- Building tenant isolation through JSON Web Token (JWT) claims

- Creating an event-driven auto-refresh mechanism with Amazon EventBridge and AWS Lambda

- Implementing zero-downtime configuration updates with gRPC streaming (gRPC is a high-performance communication protocol)

- Addressing the cache TTL problem for rapidly-changing tenant metadata

By the end of this post, you’ll understand how to architect a configuration service that handles complex multi-tenant requirements while optimizing for both performance and operational simplicity.

Solution overview

The architecture uses four AWS services orchestrated through a NestJS-based gRPC service to create a reliable, event-driven configuration management system. Let’s first understand the overall architecture before diving into each component’s implementation details.

Architecture components

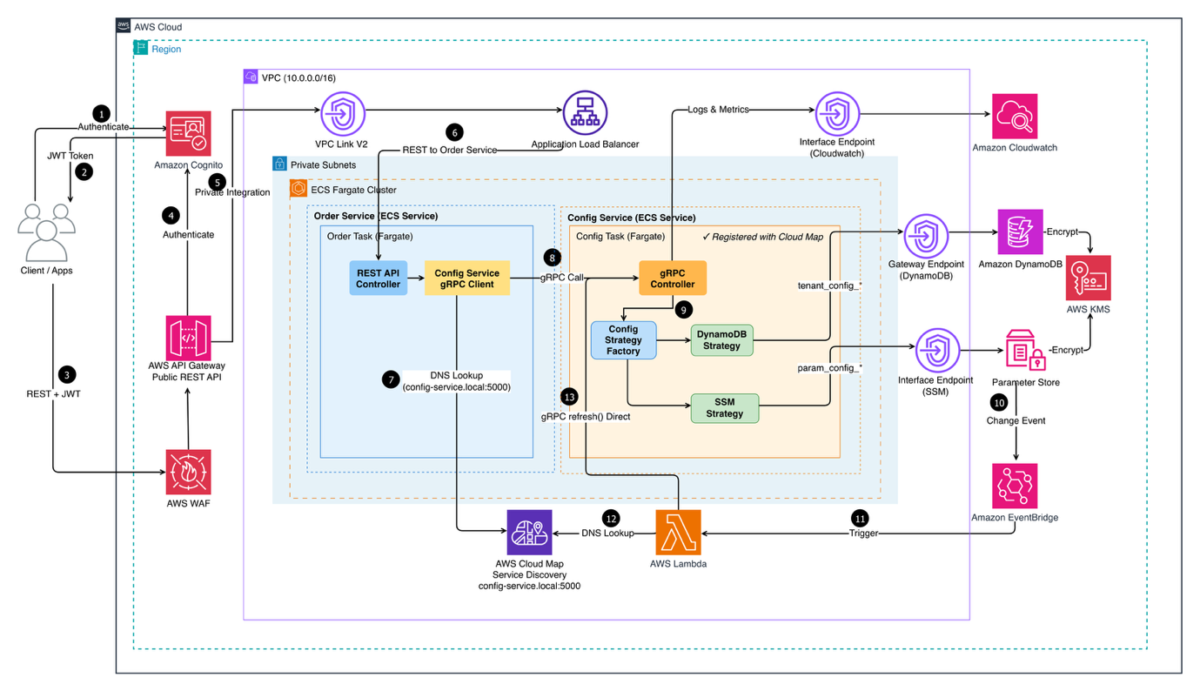

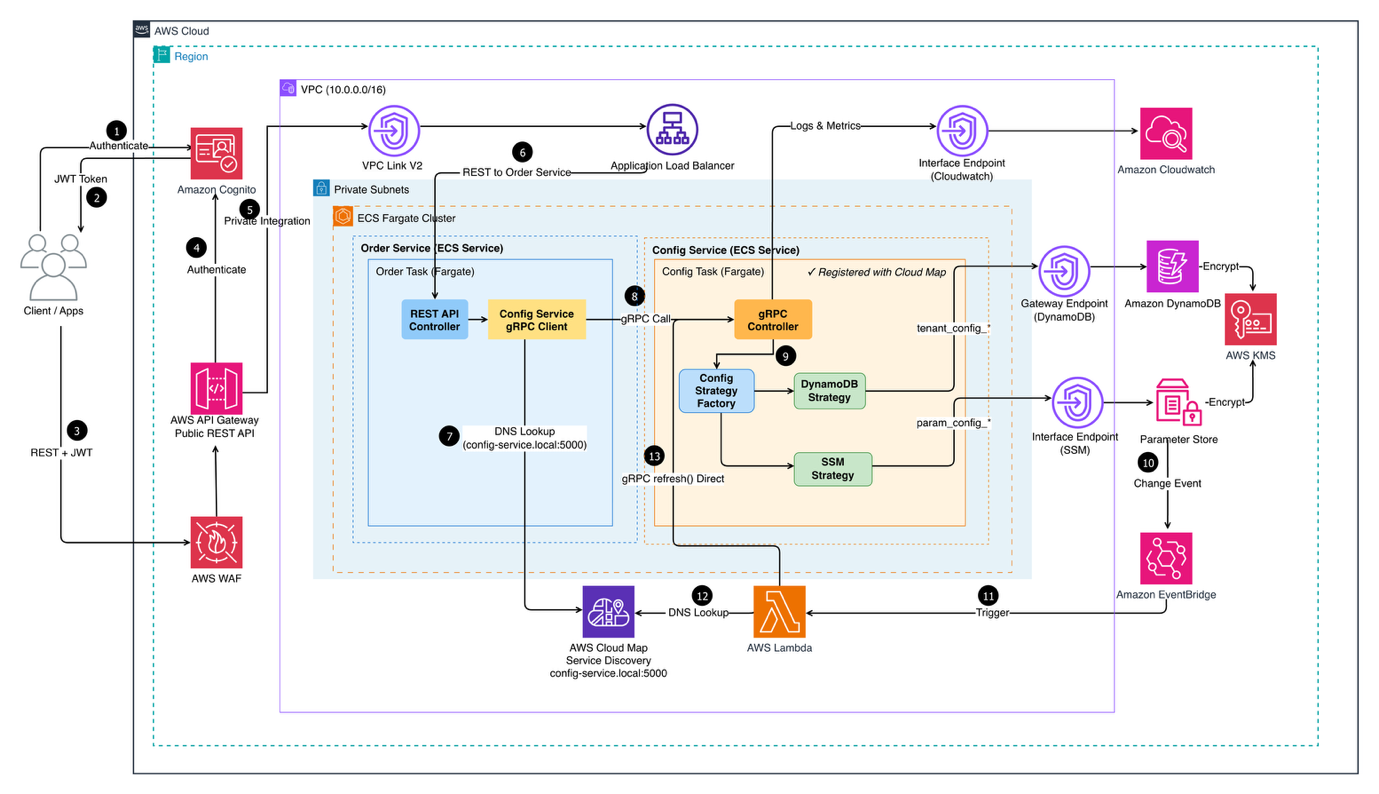

The following diagram shows the end-to-end architecture of the Multi-Tenant Configuration Service deployed on AWS, from how client requests enter the system to how configuration data is retrieved from the right storage backend.

Figure 1: Multi-Tenant Configuration Service Architecture

Client applications authenticate via Amazon Cognito and pass through AWS WAF before reaching Amazon API Gateway. Traffic is then routed through a VPC Link to an Application Load Balancer, which distributes requests across two core microservices running on Amazon Elastic Container Service (Amazon ECS) on AWS Fargate within private subnets:

- Order Service: handles incoming REST requests and delegates configuration lookups to the Config Service via gRPC

- Config Service: exposes a gRPC API and uses a Config Strategy Factory to dynamically select the appropriate storage backend (DynamoDB or Parameter Store) based on the request

Service discovery is managed by AWS Cloud Map, while Amazon CloudWatch centralizes logs and metrics across services.

The system is organized into four interconnected layers, each addressing a specific aspect of the configuration management challenge:

1. Storage layer – multi-backend strategy

The storage layer strategically uses two complementary AWS services, each optimized for different configuration access patterns and requirements.

- Amazon DynamoDB: Stores tenant-specific configurations. These are settings unique to each customer, such as payment gateway preferences or feature flags. With single-digit millisecond latency, DynamoDB handles high-frequency reads efficiently. The schema uses composite keys (`TENANT#{tenantId}` as the partition key, `CONFIG#{configType}` as the sort key) for efficient tenant-scoped queries and built-in multi-tenant isolation at the data model level.

- AWS Systems Manager Parameter Store: Manages shared parameters. These are configuration values used across multiple services or tenants, such as API endpoints, database connection strings, and region-specific settings. Unlike tenant-specific configs that change frequently, these parameters are relatively static but benefit from hierarchical organization. The path structure (`/config-service/{tenantId}/{service}/{parameter}`) enables bulk retrieval by path, so service initialization needs a single paginated path query instead of dozens of individual parameter lookups.
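The two key layouts can be captured in small builder functions. This sketch assumes the DynamoDB key attributes are named `PK` and `SK`, and it adds a character allow-list (also an assumption; the post does not specify one) so that an identifier containing `#` or `/` cannot produce a key or path that points into another tenant’s data.

```typescript
// Assumed allow-list: letters, digits, underscore, dot, hyphen. It excludes the
// "#" and "/" delimiters, so a crafted id cannot alias another tenant's key/path.
const SAFE_ID = /^[A-Za-z0-9_.-]+$/;

function assertSafeId(label: string, value: string): void {
  if (!SAFE_ID.test(value)) {
    throw new Error(`Invalid ${label}: "${value}"`);
  }
}

interface TenantConfigKey {
  PK: string; // partition key: TENANT#{tenantId}
  SK: string; // sort key: CONFIG#{configType}
}

// Hypothetical helper: DynamoDB composite key for one tenant configuration item.
function tenantConfigKey(tenantId: string, configType: string): TenantConfigKey {
  assertSafeId("tenantId", tenantId);
  assertSafeId("configType", configType);
  return { PK: `TENANT#${tenantId}`, SK: `CONFIG#${configType}` };
}

// Hypothetical helper: Parameter Store name, or the path prefix when `parameter`
// is omitted (the prefix is what a bulk path query would use).
function parameterPath(tenantId: string, service: string, parameter?: string): string {
  assertSafeId("tenantId", tenantId);
  assertSafeId("service", service);
  const base = `/config-service/${tenantId}/${service}`;
  if (parameter === undefined) return base;
  assertSafeId("parameter", parameter);
  return `${base}/${parameter}`;
}
```

Calling `parameterPath("acme", "orders")` yields `/config-service/acme/orders`, the value you would pass as `Path` (with `Recursive: true`) to `GetParametersByPath`. Each response returns at most 10 parameters, so the caller follows `NextToken` until it is absent.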

2. Service layer – gRPC with strategy pattern

A NestJS-based microservice implements the configuration retrieval logic using gRPC for high-performance, type-safe communication. gRPC serializes messages with Protocol Buffers and runs over HTTP/2, which keeps payloads compact and multiplexes calls over long-lived connections. This reduces network bandwidth and response times for service-to-service communication, where compatibility with web browsers isn’t a requirement.

At the core is a Strategy pattern implementation that selects the storage backend based on configuration key prefixes. The pattern makes it straightforward to add new storage backends (like Amazon Simple Storage Service (Amazon S3) for large configuration files) without modifying the core service logic.
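A minimal sketch of the Strategy pattern in this role follows. The `ConfigStrategy` interface, the `ConfigStrategyFactory` class, and the in-memory stand-in backend are hypothetical; a real strategy would wrap a DynamoDB or Parameter Store client instead of a `Map`.

```typescript
// Contract every storage backend implements; the prefix selects the strategy.
interface ConfigStrategy {
  readonly prefix: string;
  get(tenantId: string, name: string): Promise<string | undefined>;
}

// Stand-in backend: a Map keyed by "tenantId:name" replaces a real SDK client.
class InMemoryStrategy implements ConfigStrategy {
  readonly prefix: string;
  private readonly data: Map<string, string>;

  constructor(prefix: string, data: Map<string, string>) {
    this.prefix = prefix;
    this.data = data;
  }

  async get(tenantId: string, name: string): Promise<string | undefined> {
    // Every lookup is scoped by tenantId.
    return this.data.get(`${tenantId}:${name}`);
  }
}

class ConfigStrategyFactory {
  private readonly strategies: ConfigStrategy[] = [];

  // Adding a backend (for example, an S3-backed strategy) means registering
  // another strategy; callers of forKey() do not change.
  register(strategy: ConfigStrategy): this {
    this.strategies.push(strategy);
    return this;
  }

  forKey(key: string): { strategy: ConfigStrategy; name: string } {
    const strategy = this.strategies.find((s) => key.startsWith(s.prefix));
    if (!strategy) throw new Error(`No strategy for key "${key}"`);
    return { strategy, name: key.slice(strategy.prefix.length) };
  }
}

// Service-level lookup: pick the strategy by prefix, then delegate.
async function getConfig(factory: ConfigStrategyFactory, tenantId: string, key: string) {
  const { strategy, name } = factory.forKey(key);
  return strategy.get(tenantId, name);
}
```

Keeping selection in the factory and storage access in the strategies means `getConfig` never branches on backend type, which is what lets new backends slot in without touching the gRPC handler.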

3. Authentication layer – Amazon Cognito

User authentication flows through Amazon Cognito with custom attributes:

- `custom:tenantId` (immutable) – Tenant identifier embedded in the JWT

- `custom:role` (mutable) – User role for authorization

Critical security design: The service never accepts tenantId from request parameters. Instead, it extracts the tenant context from the validated JWT, so a caller cannot reach another tenant’s data by manipulating the request payload.
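Amazon Cognito includes custom attributes such as `custom:tenantId` in the user’s ID token. The sketch below assumes the token has already been verified (signature, expiry, audience) by an earlier auth step and shows only the extraction rule: tenant context comes from claims, and any `tenantId` in the payload is ignored. The type and function names are hypothetical.

```typescript
// Claims of a JWT that an earlier step has already verified (assumption).
type VerifiedClaims = Record<string, unknown>;

interface TenantContext {
  tenantId: string;
  role: string;
}

function tenantContextFromClaims(claims: VerifiedClaims): TenantContext {
  const tenantId = claims["custom:tenantId"];
  const role = claims["custom:role"];
  // Reject tokens without the attributes rather than guessing a tenant.
  if (typeof tenantId !== "string" || tenantId.length === 0) {
    throw new Error("Token is missing custom:tenantId");
  }
  if (typeof role !== "string" || role.length === 0) {
    throw new Error("Token is missing custom:role");
  }
  return { tenantId, role };
}

// Hypothetical request shape: a tenantId in the payload is deliberately ignored.
interface ConfigRequest {
  tenantId?: string;
  key: string;
}

function resolveRequest(claims: VerifiedClaims, payload: ConfigRequest) {
  const { tenantId, role } = tenantContextFromClaims(claims);
  return { tenantId, role, key: payload.key };
}
```

A request carrying `{ tenantId: "globex" }` from a user whose token says `acme` is resolved as `acme`; tampering with the payload changes nothing.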

4. Event-driven refresh layer

Traditional configuration updates present a dilemma: how do you keep services synchronized without compromising performance or causing downtime?

Polling approaches continuously check for changes, generating unnecessary API calls that cost money even when nothing changes. They also introduce delays. Services don’t see updates until the next poll cycle, which could be seconds or minutes later.

Service restart approaches cause downtime, drop active connections, and disrupt user sessions. For SaaS applications serving customers 24/7, restart-based updates are unacceptable.

The event-driven refresh layer addresses both problems with a reactive architecture: Parameter Store emits change events to Amazon EventBridge, and an EventBridge rule invokes AWS Lambda to update the service’s local cache. Configuration updates propagate within seconds, and users experience no interruption.
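Parameter Store publishes a `Parameter Store Change` event (source `aws.ssm`) to EventBridge when a parameter is created, updated, or deleted. The sketch shows a rule pattern that narrows those events to the `/config-service/` path and a pure function a Lambda handler could use to turn an event into a cache-refresh instruction. The `RefreshInstruction` shape and the reload/evict split are hypothetical; the post does not specify how the Lambda function reaches the service’s cache.

```typescript
// Subset of the Parameter Store change event fields used here.
interface ParameterStoreChangeEvent {
  source: string;
  "detail-type": string;
  detail: { name: string; operation: string; type?: string };
}

// EventBridge rule pattern: Parameter Store changes under /config-service/ only.
const RULE_PATTERN = {
  source: ["aws.ssm"],
  "detail-type": ["Parameter Store Change"],
  detail: { name: [{ prefix: "/config-service/" }] },
};

// Hypothetical instruction the Lambda would forward to the Config Service.
interface RefreshInstruction {
  tenantId: string;
  service: string;
  parameter: string;
  action: "reload" | "evict";
}

function toRefreshInstruction(event: ParameterStoreChangeEvent): RefreshInstruction | null {
  if (event.source !== "aws.ssm" || event["detail-type"] !== "Parameter Store Change") {
    return null;
  }
  // Expected layout: /config-service/{tenantId}/{service}/{parameter}
  const parts = event.detail.name.split("/");
  if (parts.length !== 5 || parts[0] !== "" || parts[1] !== "config-service") {
    return null; // not a path this service owns
  }
  const [, , tenantId, service, parameter] = parts;
  // A deleted parameter is evicted; any other change triggers a re-read.
  const action = event.detail.operation === "Delete" ? "evict" : "reload";
  return { tenantId, service, parameter, action };
}
```

Filtering by path prefix in the rule keeps unrelated parameter changes from invoking the function at all; the path check inside the function is a second guard against malformed names.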

Technical implementation

The following sections detail the implementation, starting with the data model, which serves as the backbone for tenant isolation and efficient querying.

A. Multi-tenant data model

The foundation of tenant isolation begins with the data model. Using DynamoDB’s composite key structure, we achieve both tenant isolation and efficient querying without requiring separate tables per tenant.
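With this key design, a tenant-scoped read is a single Query on that tenant’s partition. The sketch builds the input object for a DynamoDB Query; you could pass it to `QueryCommand` from `@aws-sdk/lib-dynamodb`, which accepts plain JavaScript values. The table name and the `PK`/`SK` attribute names are assumptions.

```typescript
// Shape of the Query parameters used here (document-client style values).
interface TenantQueryInput {
  TableName: string;
  KeyConditionExpression: string;
  ExpressionAttributeNames: Record<string, string>;
  ExpressionAttributeValues: Record<string, string>;
}

// Hypothetical helper: all configs for one tenant, optionally narrowed by type prefix.
function tenantConfigQuery(
  tableName: string,
  tenantId: string,
  configTypePrefix = "",
): TenantQueryInput {
  return {
    TableName: tableName,
    // Equality on the partition key pins the query to exactly one tenant;
    // begins_with on the sort key narrows within that tenant's items.
    KeyConditionExpression: "#pk = :pk AND begins_with(#sk, :sk)",
    ExpressionAttributeNames: { "#pk": "PK", "#sk": "SK" },
    ExpressionAttributeValues: {
      ":pk": `TENANT#${tenantId}`,
      ":sk": `CONFIG#${configTypePrefix}`,
    },
  };
}
```

Because the partition key condition is an equality, the query cannot return items from another tenant’s partition, and no table-per-tenant layout is needed to get that guarantee.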

DynamoDB schema design:

The following example shows a tenant-specific configuration stored in DynamoDB, illustrating how composite keys enable both isolation and efficient access:

Author: Koshal Agrawal