Cepsa Química improves the efficiency and accuracy of product stewardship using Amazon Bedrock

This is a guest post co-written with Vicente Cruz Mínguez, Head of Data and Advanced Analytics at Cepsa Química, and Marcos Fernández Díaz, Senior Data Scientist at Keepler.

Generative artificial intelligence (AI) is rapidly emerging as a transformative force, poised to disrupt and reshape businesses of all sizes and across industries. Generative AI empowers organizations to combine their data with the power of machine learning (ML) algorithms to generate human-like content, streamline processes, and unlock innovation. As with all other industries, the energy sector is impacted by the generative AI paradigm shift, unlocking opportunities for innovation and efficiency. One of the areas where generative AI is rapidly showing its value is the streamlining of operational processes, reducing costs, and enhancing overall productivity.

In this post, we explain how Cepsa Química and partner Keepler have implemented a generative AI assistant to increase the efficiency of the product stewardship team when answering compliance queries related to the chemical products they market. To accelerate development, they used Amazon Bedrock, a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy and safety.

Cepsa Química, a world leader in the manufacturing of linear alkylbenzene (LAB) and ranking second in the production of phenol, is a company aligned with Cepsa’s Positive Motion strategy for 2030, contributing to the decarbonization and sustainability of its processes through the use of renewable raw materials, development of products with less carbon, and use of waste as raw materials.

At Cepsa’s Digital, IT, Transformation & Operational Excellence (DITEX) department, we work on democratizing the use of AI within our business areas so that it becomes another lever for generating value. Within this context, we identified product stewardship as one of the areas with the most potential for value creation through generative AI. To create the first generative AI solution for one of our corporate teams, we partnered with Keepler, a cloud-centered data services consulting company that specializes in designing, building, deploying, and operating custom advanced analytics solutions on the public cloud for large organizations.

The Safety, Sustainability & Energy Transition team

The Safety, Sustainability & Energy Transition area of Cepsa Química is responsible for all human health, safety, and environmental aspects related to the products manufactured by the company and the associated raw materials, among others. In this field, its areas of action are product safety, regulatory compliance, sustainability, and customer service around safety and compliance.

One of the responsibilities of the Safety, Sustainability & Energy Transition team is product stewardship, which takes care of regulatory compliance of the marketed products. The Product Stewardship department is responsible for managing a large collection of regulatory compliance documents. Their duty involves determining which regulations apply to each specific product in the company’s portfolio, compiling a list of all the applicable regulations for a given product, and supporting other internal teams that might have questions related to these products and regulations. Example questions might be “What are the restrictions for CMR substances?”, “How long do I need to keep the documents related to a toluene sale?”, or “What is the reach characterization ratio and how do I calculate it?” The regulatory content required to answer these questions varies over time, introducing new clauses and repealing others. This work used to consume a significant percentage of the team’s time, so they identified an opportunity to generate value by reducing the search time for regulatory consultations.

The DITEX department engaged with the Safety, Sustainability & Energy Transition team for a preliminary analysis of their pain points and deemed it feasible to use generative AI techniques to speed up the resolution of compliance queries. The analysis was conducted for queries based on both unstructured data (regulatory documents and product spec sheets) and structured data (the product catalog).

An approach to product stewardship with generative AI

Large language models (LLMs) are trained with vast amounts of information crawled from the internet, capturing considerable knowledge from multiple domains. However, their knowledge is static and tied to the data used during the pre-training phase.

To overcome this limitation and provide dynamism and adaptability to knowledge base changes, we decided to follow a Retrieval Augmented Generation (RAG) approach, in which the LLMs are presented with relevant information extracted from external data sources to provide up-to-date data without the need to retrain the models. This approach is a great fit for a scenario where regulatory information is updated at a fast pace, with frequent derogations, amendments, and new regulations being published.

Additionally, the RAG-based approach enables rapid prototyping of document search use cases, allowing us to craft a solution based on regulatory information about chemical substances in a few weeks.

The solution we built is based on four main functional blocks:

- Input processing – Input regulatory PDF documents are preprocessed to extract the relevant information. Each document is divided into chunks to ease the indexing and retrieval processes based on semantic meaning.

- Embeddings generation – An embeddings model is used to encode the semantic information of each chunk into an embeddings vector, which is stored in a vector database, enabling similarity search of user queries.

- LLM chain service – This service orchestrates the solution by invoking the LLMs with a suitable prompt and creating the response that is returned to the user.

- User interface – A conversational chatbot enables interaction with users.

We divided the solution into two independent modules: one to batch process input documents and another one to answer user queries by running inference.

Batch ingestion module

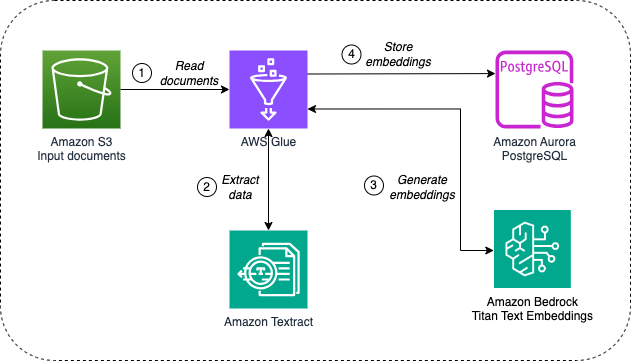

The batch ingestion module performs the initial processing of the raw compliance documents and product catalog and generates the embeddings that will be later used to answer user queries. The following diagram illustrates this architecture.

The batch ingestion module performs the following tasks:

- AWS Glue, a serverless data integration service, is used to run periodical extract, transform, and load (ETL) jobs that read input raw documents and the product catalog from Amazon Simple Storage Service (Amazon S3), an object storage service that offers industry-leading scalability, data availability, security, and performance.

- The AWS Glue job calls Amazon Textract, an ML service that automatically extracts text, handwriting, layout elements, and data from scanned documents, to process the input PDF documents. After data is extracted, the job performs document chunking, data cleanup, and postprocessing.

- The AWS Glue job uses Amazon Bedrock to generate vector embeddings for each document chunk with Amazon Titan Text Embeddings.

- Amazon Aurora PostgreSQL-Compatible Edition, a fully managed, PostgreSQL-compatible, and ACID-compliant relational database engine, stores the extracted embeddings, with the pgvector extension enabled for efficient similarity searches.
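The embedding and storage steps above can be sketched roughly as follows. This is a minimal illustration, not the code used in the project: the model ID `amazon.titan-embed-text-v1`, its 1,536-dimension output, the `document_chunks` table, and the helper names are all assumptions made for the example. The `bedrock_runtime` argument is expected to behave like a boto3 `bedrock-runtime` client.

```python
from __future__ import annotations

import json
from typing import Any, Iterable

# Assumed model ID for Amazon Titan Text Embeddings; verify it for your Region.
TITAN_EMBEDDINGS_MODEL_ID = "amazon.titan-embed-text-v1"

# Hypothetical table layout; requires `CREATE EXTENSION vector;` (pgvector).
CREATE_TABLE_SQL = """
CREATE TABLE IF NOT EXISTS document_chunks (
    id BIGSERIAL PRIMARY KEY,
    source_document TEXT NOT NULL,
    chunk_text TEXT NOT NULL,
    embedding vector(1536)
);
"""

# Parameterized insert; the embedding is passed as a pgvector text literal.
INSERT_SQL = (
    "INSERT INTO document_chunks (source_document, chunk_text, embedding) "
    "VALUES (%s, %s, %s::vector)"
)


def embed_text(bedrock_runtime: Any, text: str) -> list[float]:
    """Request an embedding for one chunk through the Bedrock runtime API."""
    response = bedrock_runtime.invoke_model(
        modelId=TITAN_EMBEDDINGS_MODEL_ID,
        contentType="application/json",
        accept="application/json",
        body=json.dumps({"inputText": text}),
    )
    payload = json.loads(response["body"].read())
    return payload["embedding"]


def to_pgvector_literal(embedding: Iterable[float]) -> str:
    """Format a vector in pgvector's text form, for example '[0.1,0.2]'."""
    return "[" + ",".join(repr(float(x)) for x in embedding) + "]"


def build_insert_rows(
    bedrock_runtime: Any, source_document: str, chunks: Iterable[str]
) -> list[tuple[str, str, str]]:
    """Embed every chunk and return parameter tuples for INSERT_SQL."""
    return [
        (source_document, chunk, to_pgvector_literal(embed_text(bedrock_runtime, chunk)))
        for chunk in chunks
    ]
```

In a Glue job, the rows returned by `build_insert_rows` could be written with a PostgreSQL driver's `executemany(INSERT_SQL, rows)`; the driver choice is left open here.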

Inference module

The inference module transforms user queries into embeddings, retrieves relevant document chunks from the knowledge base using similarity search, and prompts an LLM with the query and retrieved chunks to generate a contextual response. The following diagram illustrates this architecture.

The inference module implements the following steps:

- Users interact through a web portal, which consists of a static website stored in Amazon S3, served through Amazon CloudFront, a content delivery network (CDN), and secured with Amazon Cognito, a customer identity and access management platform.

- Queries are sent to the backend using a REST API defined in Amazon API Gateway, a fully managed service that makes it straightforward for developers to create, publish, maintain, monitor, and secure APIs at any scale, and implemented through an API Gateway private integration. The backend is implemented by an LLM chain service running on AWS Fargate, a serverless, pay-as-you-go compute engine that lets you focus on building applications without managing servers. This service orchestrates the interaction with the different LLMs using LangChain.

- The LLM chain service invokes Amazon Titan Text Embeddings on Amazon Bedrock to generate the embeddings for the user query.

- Based on the query embeddings, the relevant documents are retrieved from the embeddings database using similarity search.

- The service composes a prompt that includes the user query and the documents extracted from the knowledge base. The prompt is sent to Anthropic Claude 2.0 on Amazon Bedrock, and the model answer is sent back to the user.
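The retrieval and prompt composition steps above might look like the following sketch. It is illustrative only: the `document_chunks` table and its columns, the prompt wording, and the helper names are assumptions, not the project's actual implementation. The SQL relies on pgvector's `<=>` cosine distance operator, and the request body follows the text completion format that Anthropic Claude 2 accepts on Amazon Bedrock.

```python
from __future__ import annotations

import json
from typing import Sequence

# Assumed model ID for Anthropic Claude 2.0 on Amazon Bedrock.
CLAUDE_MODEL_ID = "anthropic.claude-v2"

# Nearest chunks by cosine distance (pgvector's <=> operator).
# Table and column names are hypothetical.
SIMILARITY_SQL = """
SELECT source_document, chunk_text
FROM document_chunks
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def compose_prompt(question: str, chunks: Sequence[tuple[str, str]]) -> str:
    """Build a Claude 2 style prompt from (source, text) pairs and the question."""
    context = "\n\n".join(
        f'<document source="{source}">\n{text}\n</document>' for source, text in chunks
    )
    return (
        "\n\nHuman: Answer the question using only the documents below and cite "
        "the source of each fact. If the documents do not contain the answer, say so."
        f"\n\n{context}\n\nQuestion: {question}\n\nAssistant:"
    )


def build_claude_request(prompt: str, max_tokens: int = 1024) -> str:
    """Serialize the JSON body passed to invoke_model for Claude 2."""
    return json.dumps(
        {"prompt": prompt, "max_tokens_to_sample": max_tokens, "temperature": 0.0}
    )
```

Keeping the source name next to each chunk in the prompt is one way to let the model return document references with its answer.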

Note on the RAG implementation

The product stewardship chatbot was built before Knowledge Bases for Amazon Bedrock was generally available. Knowledge Bases for Amazon Bedrock is a fully managed capability that helps you implement the entire RAG workflow from ingestion to retrieval and prompt augmentation without having to build custom integrations to data sources and manage data flows. Knowledge Bases manages the initial vector store set up, handles the embedding and querying, and provides source attribution and short-term memory needed for production RAG applications.

With Knowledge Bases for Amazon Bedrock, the third and fourth steps of both the batch ingestion and inference modules can be significantly simplified.

Challenges and solutions

In this section, we discuss the challenges we encountered during the development of the system and the decisions we made to overcome those challenges.

Data preprocessing and chunking strategy

We discovered that the input documents contained a variety of structural complexities, which posed a challenge in the processing stage. For instance, some tables contain large amounts of information with minimal context except for the header, which is displayed at the top of the table. This can make it complex to obtain the right answers to user queries, because the retrieval process might lack context.

Additionally, some document annexes are linked to other sections of the document or even other documents, leading to incomplete data retrieval and generation of inaccurate answers.

To address these challenges, we implemented three mitigation strategies:

- Data chunking – We decided to use larger chunk sizes with significant overlaps to provide maximum context for each chunk during ingestion. However, we set an upper limit to avoid losing the semantic meaning of the chunk.

- Model selection – We selected a model with a large context window to generate responses that take a larger context into account. Anthropic Claude 2.0 on Amazon Bedrock, with a 100K-token context window, provided the most accurate results. (The system was built before Anthropic Claude 2.1 or the Anthropic Claude 3 model family were available on Amazon Bedrock.)

- Query variants – Prior to retrieving documents from the database, multiple variants of the user query are generated using an LLM. Documents for all variants are retrieved and deduplicated before being provided as context for the LLM query.

These three strategies significantly enhanced the retrieval and response accuracy of the RAG system.
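As a rough illustration of the data chunking strategy, the following sketch splits text into fixed-size chunks that overlap their neighbors. It works on characters for simplicity, and the default sizes are placeholder values chosen for the example, not the values used in the project.

```python
from __future__ import annotations


def chunk_text(text: str, chunk_size: int = 2000, overlap: int = 400) -> list[str]:
    """Split text into chunks of at most chunk_size characters.

    Each chunk repeats the last `overlap` characters of the previous one,
    so context that straddles a boundary appears in both chunks.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")

    step = chunk_size - overlap
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a chunk reaches the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

For example, `chunk_text("abcdefghij", chunk_size=4, overlap=2)` returns `["abcd", "cdef", "efgh", "ghij"]`. A production version would more likely split on tokens or on sentence and section boundaries.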

Evaluation of results and process refinement

Evaluating the responses from the LLM models is another challenge that is not found in traditional AI use cases. Because of the free text nature of the output, it’s difficult to assess and compare different responses in terms of a metric or KPI, leading to a manual review in most cases. However, a manual process is time-consuming and not scalable.

To minimize the drawbacks, we created a benchmarking dataset with the help of seasoned users, containing the following information:

- Representative questions that require data combined from different documents

- Ground truth answers for each question

- References to the source documents, pages, and line numbers where the right answers are found

Then we implemented an automatic evaluation system with Anthropic Claude 2.0 on Amazon Bedrock, with different prompting strategies to evaluate document retrieval and response formation. This approach allowed for adjustment of different parameters in a fast and automated manner:

- Preprocessing – Tried different values for chunk size and overlap size

- Retrieval – Tested several retrieval techniques of incremental complexity

- Querying – Ran the tests with different LLMs hosted on Amazon Bedrock:

  - Amazon Titan Text Premier

  - Cohere Command v1.4

  - Anthropic Claude Instant

  - Anthropic Claude 2.0
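An automatic evaluation loop of this kind could be sketched as follows. The structure is hypothetical: `BenchmarkItem`, the judge prompt wording, and the CORRECT/INCORRECT verdict format are assumptions for illustration. The `answer_fn` and `judge_fn` callables stand in for the RAG pipeline and for the judging LLM, respectively.

```python
from __future__ import annotations

from dataclasses import dataclass
from itertools import product
from typing import Callable, Sequence


@dataclass(frozen=True)
class BenchmarkItem:
    question: str
    ground_truth: str
    source_refs: tuple[str, ...]  # for example, document, page, and line references


def build_judge_prompt(item: BenchmarkItem, candidate: str) -> str:
    """Ask the judging LLM to compare a candidate answer with the ground truth."""
    return (
        "Compare the candidate answer with the reference answer.\n"
        f"Question: {item.question}\n"
        f"Reference answer: {item.ground_truth}\n"
        f"Candidate answer: {candidate}\n"
        "Reply with CORRECT or INCORRECT."
    )


def parse_verdict(judge_output: str) -> bool:
    """Interpret the judge's reply; anything other than CORRECT counts as a miss."""
    return judge_output.strip().upper().startswith("CORRECT")


def evaluate(
    items: Sequence[BenchmarkItem],
    answer_fn: Callable[[str], str],
    judge_fn: Callable[[str], str],
) -> float:
    """Return the fraction of benchmark questions judged correct."""
    if not items:
        return 0.0
    correct = sum(
        parse_verdict(judge_fn(build_judge_prompt(item, answer_fn(item.question))))
        for item in items
    )
    return correct / len(items)


def grid_search(
    items: Sequence[BenchmarkItem],
    make_answer_fn: Callable[[int, int], Callable[[str], str]],
    judge_fn: Callable[[str], str],
    chunk_sizes: Sequence[int],
    overlaps: Sequence[int],
) -> dict[tuple[int, int], float]:
    """Score every valid (chunk_size, overlap) combination."""
    results: dict[tuple[int, int], float] = {}
    for chunk_size, overlap in product(chunk_sizes, overlaps):
        if overlap >= chunk_size:
            continue  # an overlap as large as the chunk is not meaningful
        results[(chunk_size, overlap)] = evaluate(
            items, make_answer_fn(chunk_size, overlap), judge_fn
        )
    return results
```

The same loop can be repeated per retrieval technique or per candidate LLM by swapping the `make_answer_fn` factory.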

The final solution consists of three chains: one for translating the user query into English, one for generating variations of the input question, and one for composing the final response.
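The three chains can be pictured with the following simplified sketch. It does not use LangChain and is not the project's code: the prompts, function names, and the `llm` and `retriever` callables are placeholders that stand in for the Amazon Bedrock model calls and the pgvector similarity search.

```python
from __future__ import annotations

from typing import Callable, Sequence

LLM = Callable[[str], str]                  # prompt in, completion out
Retriever = Callable[[str], Sequence[str]]  # query in, relevant chunks out


def translate_to_english(llm: LLM, query: str) -> str:
    """Chain 1: normalize the user query to English."""
    return llm(f"Translate the following question into English:\n\n{query}").strip()


def generate_variants(llm: LLM, query: str, n: int = 3) -> list[str]:
    """Chain 2: ask the LLM for rephrasings; the original query is kept first."""
    raw = llm(
        f"Write {n} alternative phrasings of the question below, one per line."
        f"\n\n{query}"
    )
    variants = [line.strip() for line in raw.splitlines() if line.strip()]
    return [query] + variants[:n]


def retrieve_deduplicated(retriever: Retriever, queries: Sequence[str]) -> list[str]:
    """Retrieve chunks for every variant, dropping repeats and keeping order."""
    seen: set[str] = set()
    unique: list[str] = []
    for query in queries:
        for chunk in retriever(query):
            if chunk not in seen:
                seen.add(chunk)
                unique.append(chunk)
    return unique


def answer(llm: LLM, retriever: Retriever, user_query: str) -> str:
    """Chain 3: compose the final response from the deduplicated context."""
    english = translate_to_english(llm, user_query)
    queries = generate_variants(llm, english)
    context = "\n\n".join(retrieve_deduplicated(retriever, queries))
    return llm(
        "Answer the question using only this context.\n\n"
        f"Context:\n{context}\n\nQuestion: {english}"
    )
```

Deduplicating before the final call keeps the context from filling up with the same chunk returned for several query variants.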

Achieved improvements and next steps

We built a conversational interface for the Safety, Sustainability & Energy Transition team that helps the product stewardship team be more efficient and obtain answers to compliance queries faster. Furthermore, the answers contain references to the input documents used by the LLM to generate the reply, so the team can double-check the response and find additional context if it’s needed. The following screenshot shows an example of the conversational interface.

Some of the qualitative and quantitative improvements identified by the product stewardship team through the use of the solution are:

- Query times – The following table summarizes the search time saved by query complexity and user seniority (considering all search times have been reduced to less than 1 minute).

| Complexity | Time saved, junior user (minutes) | Time saved, senior user (minutes) |
| --- | --- | --- |
| Low | 3.3 | 2 |
| Medium | 9.25 | 4 |
| High | 28 | 10 |

- Answer quality – The implemented system offers additional context and document references that are used by the users to improve the quality of the answer.

- Operational efficiency – The implemented system has accelerated the regulatory query process, directly enhancing the department’s operational efficiency.

From the DITEX department, we’re currently working with other business areas at Cepsa Química to identify similar use cases to help create a corporate-wide tool that reuses components from this first initiative and generalizes the use of generative AI across business functions.

Conclusion

In this post, we shared how Cepsa Química and partner Keepler have implemented a generative AI assistant that uses Amazon Bedrock and RAG techniques to process, store, and query the corpus of knowledge related to product stewardship. As a result, users save up to 25 percent of their time when they use the assistant to solve compliance queries.

If you want your business to get started with generative AI, visit Generative AI on AWS and connect with a specialist, or quickly build a generative AI application in PartyRock.

About the authors

Vicente Cruz Mínguez is the Head of Data & Advanced Analytics at Cepsa Química. He has more than 8 years of experience with big data and machine learning projects in financial, retail, energy, and chemical industries. He is currently leading the Data, Advanced Analytics & Cloud Development team in the Digital, IT, Transformation & Operational Excellence department at Cepsa Química, with a focus on feeding the corporate data lake and democratizing data for analysis, machine learning projects, and business analytics. Since 2023, he has also been working on scaling the use of generative AI in all departments.

Marcos Fernández Díaz is a Senior Data Scientist at Keepler, with 10 years of experience developing end-to-end machine learning solutions for different clients and domains, including predictive maintenance, time series forecasting, image classification, object detection, industrial process optimization, and federated machine learning. His main interests include natural language processing and generative AI. Outside of work, he is a travel enthusiast.

Guillermo Menéndez Corral is a Sr. Manager, Solutions Architecture at AWS for Energy and Utilities. He has over 18 years of experience designing and building software products and currently helps AWS customers in the energy industry harness the power of the cloud through innovation and modernization.

Author: Vicente Cruz Mínguez