How Getir reduced model training durations by 90% with Amazon SageMaker and AWS Batch

This is a guest post co-authored by Nafi Ahmet Turgut, Hasan Burak Yel, and Damla Şentürk from Getir.

Established in 2015, Getir has positioned itself as the trailblazer in the sphere of ultrafast grocery delivery. This innovative tech company has revolutionized the last-mile delivery segment with its compelling offering of “groceries in minutes.” With a presence across Turkey, the UK, the Netherlands, Germany, and the United States, Getir has become a multinational force to be reckoned with. Today, the Getir brand represents a diversified conglomerate encompassing nine different verticals, all working synergistically under a singular umbrella.

In this post, we explain how we built an end-to-end product category prediction pipeline to help commercial teams by using Amazon SageMaker and AWS Batch, reducing model training duration by 90%.

Understanding our existing product assortment in a detailed manner is a crucial challenge that we, along with many businesses, face in today’s fast-paced and competitive market. An effective solution to this problem is the prediction of product categories. A model that generates a comprehensive category tree allows our commercial teams to benchmark our existing product portfolio against that of our competitors, offering a strategic advantage. Therefore, our central challenge is the creation and implementation of an accurate product category prediction model.

We capitalized on the powerful tools provided by AWS to tackle this challenge and effectively navigate the complex field of machine learning (ML) and predictive analytics. Our efforts led to the successful creation of an end-to-end product category prediction pipeline, which combines the strengths of SageMaker and AWS Batch.

This capability of predictive analytics, particularly the accurate forecast of product categories, has proven invaluable. It provided our teams with critical data-driven insights that optimized inventory management, enhanced customer interactions, and strengthened our market presence.

The methodology we explain in this post ranges from the initial phase of feature set gathering to the final implementation of the prediction pipeline. An important aspect of our strategy has been the use of SageMaker and AWS Batch to fine-tune pre-trained BERT models for seven different languages. Additionally, our integration with Amazon Simple Storage Service (Amazon S3), the AWS object storage service, has been key to efficiently storing and accessing these fine-tuned models.

SageMaker is a fully managed ML service. With SageMaker, data scientists and developers can quickly and effortlessly build and train ML models, and then directly deploy them into a production-ready hosted environment.

As a fully managed service, AWS Batch helps you run batch computing workloads of any scale. AWS Batch automatically provisions compute resources and optimizes the workload distribution based on the quantity and scale of the workloads. With AWS Batch, there’s no need to install or manage batch computing software, so you can focus your time on analyzing results and solving problems. We used AWS Batch GPU jobs, which let a job use the GPUs of the instance it runs on.

Overview of solution

Five people from Getir’s data science team and infrastructure team worked together on this project. The project was completed in a month and deployed to production after a week of testing.

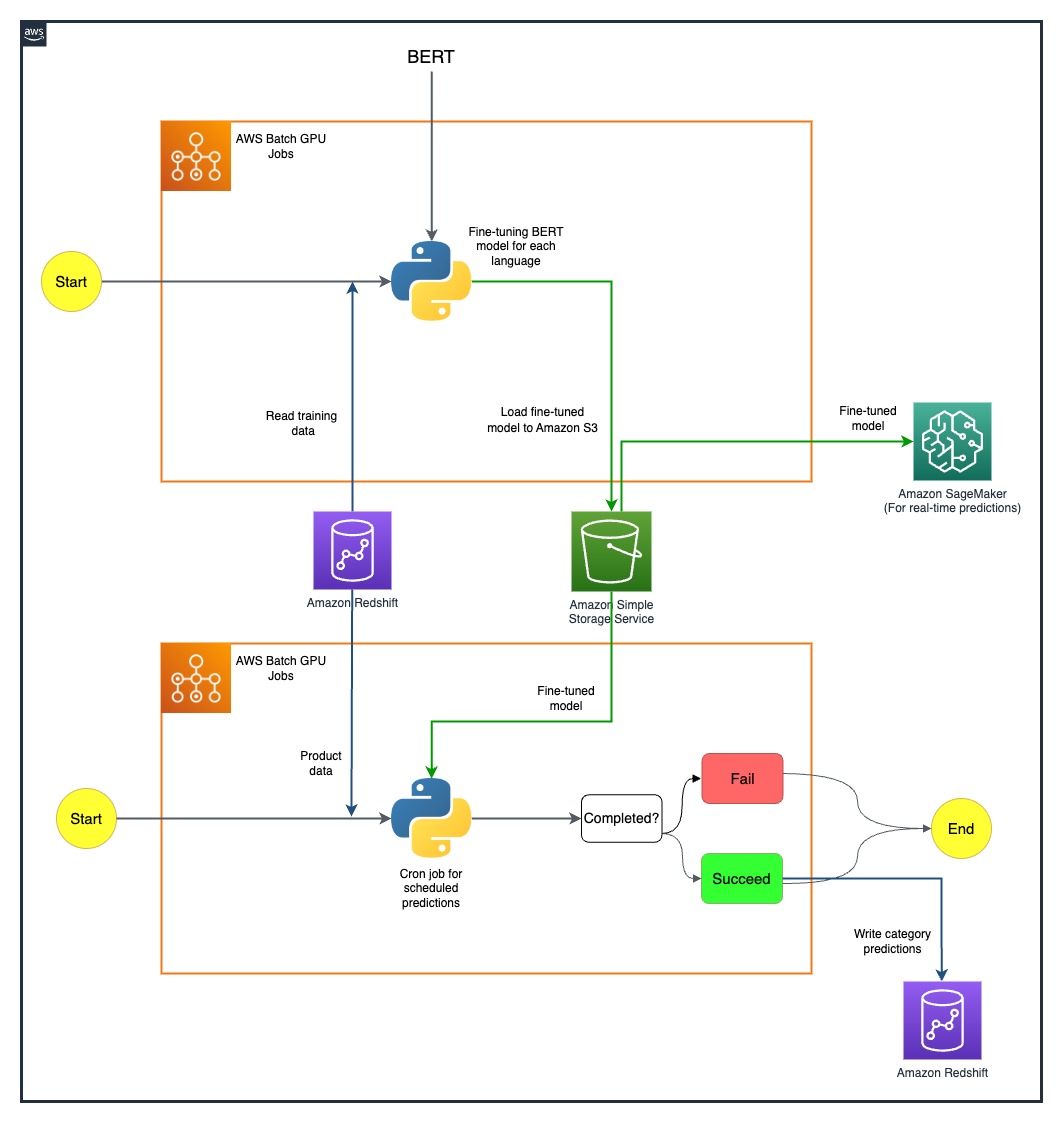

The following diagram shows the solution’s architecture.

The model pipeline is run separately for each country. The architecture includes two AWS Batch GPU cron jobs for each country, running on defined schedules.
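The following is a minimal sketch, not the production code, of how a per-country schedule with two jobs each could be laid out. The country codes, the two job types (`train` and `predict`), the rule names, and the schedule times are all assumptions made for illustration; the schedule strings use the Amazon EventBridge cron format, which is one common way to trigger AWS Batch jobs on a schedule.

```python
from dataclasses import dataclass

# Hypothetical country codes for the markets named in this post.
COUNTRIES = ["tr", "gb", "nl", "de", "us"]

# Assumed job types and schedules (EventBridge cron syntax, UTC).
# The post does not state what the two jobs are or when they run.
JOB_SCHEDULES = {
    "train": "cron(0 2 ? * SUN *)",   # weekly, Sunday 02:00
    "predict": "cron(0 4 * * ? *)",   # daily, 04:00
}


@dataclass(frozen=True)
class ScheduledJob:
    rule_name: str
    schedule_expression: str
    country: str


def build_schedule(countries, job_schedules):
    """Return one scheduled job per (country, job type) pair."""
    return [
        ScheduledJob(
            rule_name=f"category-{job}-{country}",
            schedule_expression=expression,
            country=country,
        )
        for country in countries
        for job, expression in job_schedules.items()
    ]


plan = build_schedule(COUNTRIES, JOB_SCHEDULES)
for item in plan:
    print(item.rule_name, item.schedule_expression)
```

Keeping the plan as plain data makes it easy to review the schedule for every country before any scheduling rule is created.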

We overcame some challenges by strategically deploying SageMaker and AWS Batch GPU resources. The process used to address each difficulty is detailed in the following sections.

Fine-tuning multilingual BERT models with AWS Batch GPU jobs

We sought a solution to support multiple languages for our diverse user base. BERT models were an obvious choice due to their established ability to handle complex natural language tasks effectively. To tailor these models to our needs, we used single-node AWS Batch GPU jobs to fine-tune a pre-trained BERT model for each of the seven languages we needed to support. This approach gave us high precision in predicting product categories across languages.
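As an illustration only, a single-node GPU fine-tuning job for one language could be submitted to AWS Batch roughly as follows. The job name pattern, the `LANGUAGE` environment variable, and the queue and job definition names are hypothetical; the request shape follows the AWS Batch `SubmitJob` API as exposed by boto3.

```python
def build_finetune_job(language, job_queue, job_definition, gpus=1):
    """Build keyword arguments for an AWS Batch SubmitJob call for one language."""
    return {
        "jobName": f"bert-finetune-{language}",  # hypothetical naming scheme
        "jobQueue": job_queue,
        "jobDefinition": job_definition,
        "containerOverrides": {
            # Request GPUs for the container; AWS Batch expects the value as a string.
            "resourceRequirements": [{"type": "GPU", "value": str(gpus)}],
            # Assumed convention: the training container reads the language from here.
            "environment": [{"name": "LANGUAGE", "value": language}],
        },
    }


def submit(job_kwargs):
    """Submit the job; requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the builder above runs without AWS access

    return boto3.client("batch").submit_job(**job_kwargs)["jobId"]


# "gpu-queue" and "bert-finetune:1" are placeholder names.
job = build_finetune_job("tr", "gpu-queue", "bert-finetune:1")
print(job["jobName"], job["containerOverrides"]["resourceRequirements"])
```

Separating the request builder from the `submit` call keeps the job parameters testable without touching an AWS account.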

Efficient model storage using Amazon S3

Our next step was to address model storage and management. For this, we selected Amazon S3, known for its scalability and security. Storing our fine-tuned BERT models on Amazon S3 enabled us to provide easy access to different teams within our organization, thereby significantly streamlining our deployment process. This was a crucial aspect in achieving agility in our operations and a seamless integration of our ML efforts.
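One way to keep fine-tuned models easy to find is a predictable key layout per language and version. The sketch below assumes a hypothetical `category-models/<language>/<version>/model.tar.gz` layout and a placeholder bucket name; neither comes from the actual setup described in this post.

```python
def model_key(language, version, prefix="category-models"):
    """Return the S3 object key for one fine-tuned model artifact (assumed layout)."""
    return f"{prefix}/{language}/{version}/model.tar.gz"


def upload_model(local_path, bucket, language, version):
    """Upload a model archive to S3; requires boto3 and AWS credentials."""
    import boto3  # imported lazily so model_key can be used without AWS access

    key = model_key(language, version)
    boto3.client("s3").upload_file(local_path, bucket, key)
    return f"s3://{bucket}/{key}"


print(model_key("tr", "2023-10-01"))
# category-models/tr/2023-10-01/model.tar.gz
```

With a layout like this, any team that knows the language and version can derive the object key without looking it up.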

Creating an end-to-end prediction pipeline

An efficient pipeline was required to make the best use of our fine-tuned models. We first deployed these models on SageMaker, which allowed for real-time predictions with low latency and thereby enhanced our user experience. For larger-scale batch predictions, which were equally vital to our operations, we used AWS Batch GPU jobs. This split let us balance performance and efficiency.
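For the real-time path, a client could call a SageMaker endpoint along these lines. This is a sketch under assumptions: the endpoint name `category-tr` and the `{"inputs": [...]}` JSON payload are hypothetical, and the real request format depends on the inference container. The call itself uses the SageMaker Runtime `InvokeEndpoint` API through boto3.

```python
import json


def build_invoke_request(endpoint_name, product_names):
    """Build keyword arguments for the SageMaker Runtime InvokeEndpoint call."""
    return {
        "EndpointName": endpoint_name,
        "ContentType": "application/json",
        # Assumed payload shape; adapt it to what the model container expects.
        "Body": json.dumps({"inputs": product_names}).encode("utf-8"),
    }


def predict(request):
    """Send the request and decode the JSON response; requires boto3 and AWS credentials."""
    import boto3  # imported lazily so the builder above runs without AWS access

    runtime = boto3.client("sagemaker-runtime")
    response = runtime.invoke_endpoint(**request)
    return json.loads(response["Body"].read())


request = build_invoke_request("category-tr", ["Whole milk 1 L"])
print(request["Body"].decode("utf-8"))
```

Large catalogs would go through the batch path instead, so the endpoint only has to serve small, latency-sensitive requests.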

Exploring future possibilities with SageMaker MMEs

As we continue to evolve and seek efficiencies in our ML pipeline, one avenue we are keen to explore is using SageMaker multi-model endpoints (MMEs) for deploying our fine-tuned models. With MMEs, we can potentially streamline the deployment of various fine-tuned models, ensuring efficient model management while also benefiting from the native capabilities of SageMaker like shadow variants, auto scaling, and Amazon CloudWatch integration. This exploration aligns with our continuous pursuit of enhancing our predictive analytics capabilities and providing superior experiences to our customers.
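With an MME, a single endpoint hosts many model artifacts, and each request names the artifact it wants through the `TargetModel` parameter of `InvokeEndpoint`. The sketch below shows how a per-language model could be selected; the endpoint name and the `<language>/model.tar.gz` artifact paths are hypothetical, since this setup is still something to be explored.

```python
def target_model_for(language):
    """Return the artifact path, relative to the endpoint's S3 model prefix (assumed layout)."""
    return f"{language}/model.tar.gz"


def build_mme_request(endpoint_name, language, body):
    """Build InvokeEndpoint keyword arguments that route to one language's model."""
    return {
        "EndpointName": endpoint_name,
        "TargetModel": target_model_for(language),
        "ContentType": "application/json",
        "Body": body,
    }


# "category-mme" is a placeholder endpoint name.
request = build_mme_request("category-mme", "de", b'{"inputs": ["Vollmilch 1 L"]}')
print(request["TargetModel"])
# de/model.tar.gz
```

The resulting dictionary can be passed to `boto3.client("sagemaker-runtime").invoke_endpoint(**request)`; SageMaker loads the named artifact on first use and keeps frequently used models in memory.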

Conclusion

Our successful integration of SageMaker and AWS Batch has not only addressed our specific challenges but also significantly boosted our operational efficiency. Through the implementation of a sophisticated product category prediction pipeline, we are able to empower our commercial teams with data-driven insights, thereby facilitating more effective decision-making.

Our results speak volumes about our approach’s effectiveness. We have achieved an 80% prediction accuracy across all four levels of category granularity, which plays an important role in shaping the product assortments for each country we serve. This level of precision extends our reach beyond language barriers and ensures we cater to our diverse user base with the utmost accuracy.
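To make the metric concrete, here is one simple way to measure accuracy separately at each level of a four-level category tree. The category paths are toy data invented for this example, and the per-level matching rule is an assumption; it is not necessarily how the figure above was computed.

```python
def accuracy_by_level(true_paths, predicted_paths, levels=4):
    """Return the share of items whose predicted category matches at each level."""
    if len(true_paths) != len(predicted_paths) or not true_paths:
        raise ValueError("inputs must be non-empty and of equal length")
    correct = [0] * levels
    for truth, prediction in zip(true_paths, predicted_paths):
        for level in range(levels):
            # Each level is scored independently of the levels above it.
            correct[level] += truth[level] == prediction[level]
    return [count / len(true_paths) for count in correct]


# Toy category paths, from the broadest level to the most specific one.
true_paths = [
    ("Food", "Dairy", "Milk", "Whole Milk"),
    ("Food", "Dairy", "Cheese", "Feta"),
    ("Home", "Cleaning", "Laundry", "Detergent"),
    ("Food", "Snacks", "Chips", "Potato Chips"),
]
predicted_paths = [
    ("Food", "Dairy", "Milk", "Skim Milk"),
    ("Food", "Dairy", "Cheese", "Feta"),
    ("Home", "Cleaning", "Dishes", "Dish Soap"),
    ("Food", "Snacks", "Chips", "Potato Chips"),
]

scores = accuracy_by_level(true_paths, predicted_paths)
print(scores)
# [1.0, 1.0, 0.75, 0.5]
```

Accuracy typically drops at deeper levels, because the finer categories are harder to tell apart.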

Moreover, by strategically using scheduled AWS Batch GPU jobs, we’ve been able to reduce our model training durations by 90%. This efficiency has further streamlined our processes and bolstered our operational agility. Efficient model storage on Amazon S3 has played a critical role in this achievement, serving both real-time and batch predictions.

For more information about how to get started building your own ML pipelines with SageMaker, see Amazon SageMaker resources. AWS Batch is an excellent option if you are looking for a low-cost, scalable solution for running batch jobs with low operational overhead. To get started, see Getting Started with AWS Batch.

About the Authors

Nafi Ahmet Turgut finished his master’s degree in Electrical & Electronics Engineering and worked as a graduate research scientist. His focus was building machine learning algorithms to simulate nervous network anomalies. He joined Getir in 2019 and currently works as a Senior Data Science & Analytics Manager. His team is responsible for designing, implementing, and maintaining end-to-end machine learning algorithms and data-driven solutions for Getir.

Hasan Burak Yel received his bachelor’s degree in Electrical & Electronics Engineering at Boğaziçi University. He worked at Turkcell, mainly focused on time series forecasting, data visualization, and network automation. He joined Getir in 2021 and currently works as a Data Science & Analytics Manager responsible for the Search, Recommendation, and Growth domains.

Damla Şentürk received her bachelor’s degree in Computer Engineering from Galatasaray University and is pursuing her master’s degree in Computer Engineering at Boğaziçi University. She joined Getir in 2022 and works as a Data Scientist. She has worked on commercial, supply chain, and discovery-related projects.

Esra Kayabalı is a Senior Solutions Architect at AWS, specializing in the analytics domain, including data warehousing, data lakes, big data analytics, batch and real-time data streaming, and data integration. She has 12 years of software development and architecture experience. She is passionate about learning and teaching cloud technologies.

Author: Nafi Ahmet Turgut