Imperva optimizes SQL generation from natural language using Amazon Bedrock

This is a guest post co-written with Ori Nakar from Imperva.

Imperva Cloud WAF protects hundreds of thousands of websites against cyber threats and blocks billions of security events every day. Counters and insights based on security events are calculated daily and used by users from multiple departments. Millions of counters are added daily, together with 20 million insights updated daily to spot threat patterns.

Our goal was to improve the user experience of an existing application used to explore the counters and insights data. The data is stored in a data lake and retrieved by SQL using Amazon Athena.

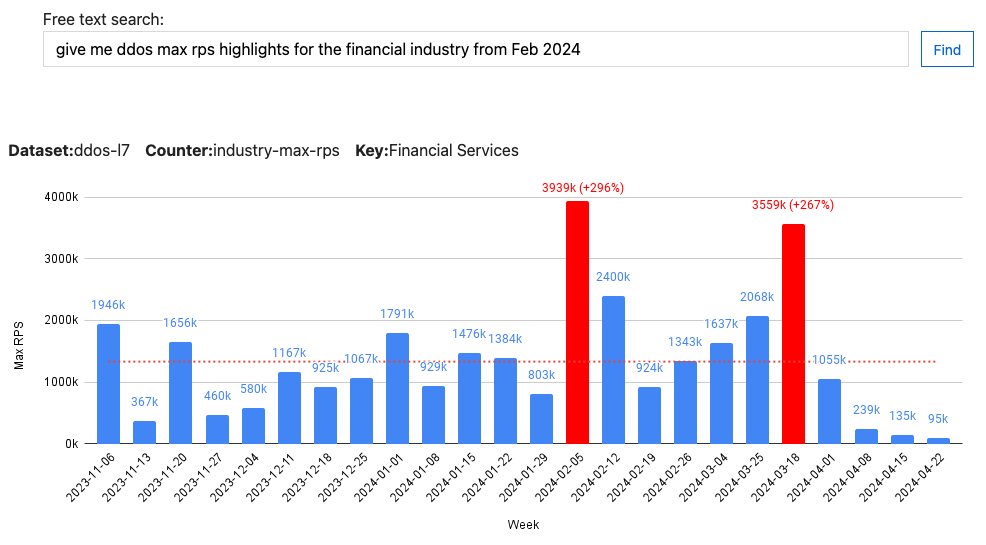

As part of our solution, we replaced multiple search fields with a single free text field. We used a large language model (LLM) with query examples to make the search work using the language used by Imperva internal users (business analysts).

The following figure shows a search query that was translated to SQL and run. The results were later formatted as a chart by the application. We have many types of insights—global, industry, and customer level insights used by multiple departments such as marketing, support, and research. Data was made available to our users through a simplified user experience powered by an LLM.

Figure 1: Insights search by natural language

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading artificial intelligence (AI) companies such as AI21 Labs, Anthropic, Cohere, Meta, Mistral, Stability AI, and Amazon within a single API, along with a broad set of capabilities you need to build generative AI applications with security, privacy, and responsible AI. Amazon Bedrock Studio is a new single sign-on (SSO)-enabled web interface that provides a way for developers across an organization to experiment with LLMs and other FMs, collaborate on projects, and iterate on generative AI applications. It offers a rapid prototyping environment and streamlines access to multiple FMs and developer tools in Amazon Bedrock.

Read more to learn about the problem, and how we obtained quality results using Amazon Bedrock for our experimentation and deployment.

The problem

Making data accessible to users through applications has always been a challenge. Data is normally stored in databases and can be queried using the most common query language, SQL. Applications use different UI components to allow users to filter and query the data. Some applications have tens of different filters and other options, all created to make the data accessible.

Querying databases through applications cannot be as flexible as running SQL queries on a known schema. Giving more power to the user comes at the expense of a simple user experience (UX). Natural language can solve this problem: it’s possible to support complex yet readable natural language queries without SQL knowledge. When the schema changes, the application UX and code remain the same or need only minor changes, which saves development time and keeps the application user interface (UI) stable for the users.

Constructing SQL queries from natural language isn’t a simple task. SQL queries must be accurate both syntactically and logically. Using an LLM with the right examples can make this task less difficult.

Figure 2: High level database access using an LLM flow

The challenge

An LLM can construct SQL queries based on natural language. The challenge is to assure quality. The user can enter any text, and the application constructs a query based on it. Unlike in traditional applications, it isn’t possible to cover every input and verify that the application functions correctly. Adding an LLM to an application adds another layer of complexity, because the response by the LLM is not deterministic. Examples sent to the LLM are based on the database data, which makes it even harder to control the requests sent to the LLM and assure quality.

The solution: A data science approach

In data science, it’s common to develop a model and fine-tune it using experimentation. The idea is to use metrics to compare experiments during development. Experiments might differ from each other in many ways, such as the input sent to the model, the model type, and other parameters. Comparing experiments shows how each change contributes to the model, which is what makes steady progress possible.

A test set is a static set of records that includes the expected result for each record. Running predictions on the test set records produces the metrics needed to compare experiments. A common metric is accuracy, which is the percentage of correct results.

In our case, the results generated by the LLM are SQL statements. These statements are not deterministic and are hard to measure directly. However, running SQL statements on a static test database is deterministic, so the returned data can be measured. We used a test database and a list of questions with known answers as a test set, which allowed us to run experiments and fine-tune our LLM-based application.
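To make the idea concrete, here is a minimal sketch of such an evaluation loop. It is not Imperva's code. The SQLite in-memory database, the `orders` and `items` rows, the questions, and the `fake_generate_sql` function (which stands in for the LLM call) are all assumptions made up for illustration. The loop runs each generated statement on the static test database and compares the returned rows with the known answer.

```python
import sqlite3


def build_test_db() -> sqlite3.Connection:
    """Create a tiny static test database (hypothetical schema and rows)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT);
        CREATE TABLE items (order_id INTEGER, name TEXT, price REAL);
        INSERT INTO orders VALUES (1, 'alice'), (2, 'bob');
        INSERT INTO items VALUES (1, 'pen', 2.0), (1, 'book', 10.0), (2, 'pen', 2.0);
        """
    )
    return conn


def run_sql(conn, sql):
    """Run a statement and return its rows sorted, or None if the SQL fails."""
    try:
        return sorted(conn.execute(sql).fetchall())
    except sqlite3.Error:
        return None


def evaluate(conn, test_set, generate_sql):
    """Compare the rows returned by each generated statement with the known answer."""
    correct = errors = 0
    for question, expected_rows in test_set:
        rows = run_sql(conn, generate_sql(question))
        if rows is None:
            errors += 1
        elif rows == sorted(expected_rows):
            correct += 1
    total = len(test_set)
    return {"accuracy": correct / total, "sql_error_rate": errors / total}


# Test set: questions paired with the answers known for the static test database.
TEST_SET = [
    ("How many orders are there?", [(2,)]),
    ("What is the total price of all items?", [(14.0,)]),
    ("Which customers bought a pen?", [("alice",), ("bob",)]),
    ("How many items are in order 1?", [(2,)]),
]

# Stand-in for the LLM: canned SQL per question, one of them deliberately broken.
CANNED_SQL = {
    "How many orders are there?": "SELECT COUNT(*) FROM orders",
    "What is the total price of all items?": "SELECT SUM(price) FROM items",
    "Which customers bought a pen?": (
        "SELECT DISTINCT o.customer FROM orders o "
        "JOIN items i ON i.order_id = o.id WHERE i.name = 'pen'"
    ),
    "How many items are in order 1?": "SELECT COUNT(*) FROM item WHERE order_id = 1",
}


def fake_generate_sql(question: str) -> str:
    return CANNED_SQL[question]


metrics = evaluate(build_test_db(), TEST_SET, fake_generate_sql)
print(metrics)
```

Because the comparison is made on returned rows rather than on SQL text, two differently worded but equivalent statements both count as correct.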

Database access using LLM: Question to answer flow

We defined the following flow for answering a question. The question is sent through a retrieval-augmented generation (RAG) process, which finds similar documents. Each document holds an example question and information about it. The relevant documents are built into a prompt and sent to the LLM, which builds a SQL statement. This flow is used both during development and at application runtime:

Figure 3: Question to answer flow

As an example, consider a database schema with two tables: orders and items. The following figure is a question to SQL example flow:

Figure 4: Question to answer flow example
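The retrieval and prompt-building steps of this flow can be sketched roughly as follows. This is an illustration under stated assumptions, not the production implementation. The example documents, the column names in `SCHEMA`, and the prompt wording are hypothetical, and word-overlap (Jaccard) similarity is used here only as a simple stand-in for the embedding-based search a real RAG process would typically use.

```python
import re
from dataclasses import dataclass


@dataclass
class Example:
    """One retrievable document: an example question and its SQL translation."""
    question: str
    sql: str


# Hypothetical question-to-SQL examples for the orders/items schema.
EXAMPLES = [
    Example("How many orders were placed?", "SELECT COUNT(*) FROM orders"),
    Example(
        "What is the total price of items in an order?",
        "SELECT order_id, SUM(price) FROM items GROUP BY order_id",
    ),
    Example("List all item names", "SELECT DISTINCT name FROM items"),
]

# Assumed columns; the source only names the two tables.
SCHEMA = "orders(id, customer)\nitems(order_id, name, price)"


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard word overlap: a simple stand-in for embedding similarity."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def retrieve(question: str, examples, k: int = 2):
    """Return the k examples whose questions are most similar to the input."""
    ranked = sorted(examples, key=lambda e: similarity(question, e.question), reverse=True)
    return ranked[:k]


def build_prompt(question: str, examples) -> str:
    """Assemble the schema, the retrieved examples, and the question into one prompt."""
    parts = ["Translate the question to SQL.", "Schema:", SCHEMA, "Examples:"]
    for example in examples:
        parts.append(f"Question: {example.question}\nSQL: {example.sql}")
    parts.append(f"Question: {question}\nSQL:")
    return "\n\n".join(parts)


user_question = "How many orders did we get?"
prompt = build_prompt(user_question, retrieve(user_question, EXAMPLES, k=2))
print(prompt)
```

The resulting prompt string is what would be sent to the LLM, whose completion is expected to be the SQL statement.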

Database access using LLM: Development process

To develop and fine-tune the application we created the following data sets:

- A static test database: Contains the relevant tables and a sample copy of the data.

- A test set: Includes questions and test database result answers.

- Question to SQL examples: A set of questions and their translations to SQL. For some examples, the returned data is included, which allows asking questions about the data and not only about the schema.

Development of the application is done by adding new questions and updating the different datasets, as shown in the following figure.

Figure 5: Adding a new question

Datasets and other parameter updates are tracked as part of adding new questions and fine-tuning of the application. We used a tracking tool to track information about the experiments such as:

- Parameters such as the number of questions, number of examples, LLM type, RAG search method

- Metrics such as the accuracy and the SQL error rate

- Artifacts such as a list of the wrong results including generated SQL, data returned, and more

Figure 6: Experiment flow
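As a rough illustration of what gets tracked per experiment, the sketch below records parameters, metrics, and artifacts in a JSON Lines file and picks the best run by accuracy. It is a minimal stand-in for a tracking tool, not the tool we used. The `ExperimentRun` structure, the file layout, and every value in the two sample runs are invented for illustration and are not Imperva's results.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class ExperimentRun:
    name: str
    params: dict      # e.g. number of examples, LLM type, RAG search method
    metrics: dict     # e.g. accuracy, SQL error rate
    artifacts: dict = field(default_factory=dict)  # e.g. list of wrong results


def log_run(path: Path, run: ExperimentRun) -> None:
    """Append one run as a JSON line so earlier runs are never overwritten."""
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(run)) + "\n")


def load_runs(path: Path) -> list:
    with path.open(encoding="utf-8") as f:
        return [ExperimentRun(**json.loads(line)) for line in f if line.strip()]


def best_run(runs, metric: str = "accuracy") -> ExperimentRun:
    """Pick the run with the highest value of the given metric."""
    return max(runs, key=lambda r: r.metrics[metric])


# Illustrative runs only; all values below are made up.
log_path = Path(tempfile.mkdtemp()) / "runs.jsonl"
log_run(log_path, ExperimentRun(
    name="exp-1",
    params={"num_examples": 10, "llm": "model-a", "rag_search": "similarity"},
    metrics={"accuracy": 0.60, "sql_error_rate": 0.20},
    artifacts={"wrong_results": [{"question": "q7", "sql": "SELECT ..."}]},
))
log_run(log_path, ExperimentRun(
    name="exp-2",
    params={"num_examples": 20, "llm": "model-a", "rag_search": "similarity"},
    metrics={"accuracy": 0.80, "sql_error_rate": 0.05},
))

best = best_run(load_runs(log_path))
print(best.name, best.metrics)
```

Keeping the wrong results as artifacts next to the parameters is what makes it possible to drill down from a metric to the specific questions that failed.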

Using a tracking tool, we were able to make progress by comparing experiments. The following figure shows the accuracy and error rate metrics for the different experiments we did:

Figure 7: Accuracy and error rate over time

When there’s a mistake or an error, we drill down into the false results and the experiment details to understand the source of the error and fix it.

Experiment and deploy using Amazon Bedrock

Amazon Bedrock is a managed service that offers a choice of high-performing foundation models. You can experiment with and evaluate top FMs for your use case and customize them with your data.

By using Amazon Bedrock, we were able to switch between models and embedding options easily. The LangChain Python library allows using different models and embeddings with Amazon Bedrock.
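The original code listing is not reproduced here, so the following is only a sketch of how switching models and embeddings could look, assuming the `langchain-aws` package and its `ChatBedrock` and `BedrockEmbeddings` classes. The `ExperimentConfig` class, the `CONFIGS` names, and the choice of model IDs are assumptions for illustration. Which models are available depends on your AWS account and Region, and constructing the objects requires AWS credentials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentConfig:
    """Hypothetical per-experiment choice of LLM and embedding model."""
    llm_model_id: str
    embedding_model_id: str
    temperature: float = 0.0


# Example combinations; swap the IDs for models enabled in your account.
CONFIGS = {
    "claude-haiku+titan": ExperimentConfig(
        llm_model_id="anthropic.claude-3-haiku-20240307-v1:0",
        embedding_model_id="amazon.titan-embed-text-v1",
    ),
    "claude-sonnet+cohere": ExperimentConfig(
        llm_model_id="anthropic.claude-3-sonnet-20240229-v1:0",
        embedding_model_id="cohere.embed-english-v3",
    ),
}


def build_models(config: ExperimentConfig):
    """Create the LangChain LLM and embeddings objects for one experiment.

    The import is done lazily so the configuration above can be inspected
    without langchain-aws installed. Calling this function needs the package
    plus AWS credentials and a Region with Amazon Bedrock access.
    """
    from langchain_aws import BedrockEmbeddings, ChatBedrock

    llm = ChatBedrock(
        model_id=config.llm_model_id,
        model_kwargs={"temperature": config.temperature},
    )
    embeddings = BedrockEmbeddings(model_id=config.embedding_model_id)
    return llm, embeddings


selected = CONFIGS["claude-haiku+titan"]
print(selected.llm_model_id, selected.embedding_model_id)
```

With this layout, changing the model or the embeddings for an experiment is a one-line change of the selected configuration key, and the rest of the flow stays the same.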

Conclusion

We used the same approach used in data science projects to construct SQL queries from natural language. The solution shown can be applied to other LLM-based applications, not only to constructing SQL. For example, it can be used for API access, building JSON data, and more. The key is to create a test set together with measurable results, and to make progress using experimentation.

Amazon Bedrock lets you use different models and switch between them to find the right one for your use case. You can compare different models, including smaller ones for better performance and lower cost. Because Amazon Bedrock is serverless, you don’t have to manage any infrastructure. We were able to test multiple models quickly, and finally integrate and deploy generative AI capabilities into our application.

You can start experimenting with natural language to SQL by running the code samples in this GitHub repository. The workshop is divided into modules that each build on the previous one while introducing a new technique to solve this problem. Many of these approaches are based on existing work from the community and are cited accordingly.

About the Authors

Ori Nakar is a Principal cyber-security researcher, a data engineer, and a data scientist at the Imperva Threat Research group.

Eitan Sela is a Generative AI and Machine Learning Specialist Solutions Architect at AWS. He works with AWS customers to provide guidance and technical assistance, helping them build and operate Generative AI and Machine Learning solutions on AWS. In his spare time, Eitan enjoys jogging and reading the latest machine learning articles.

Elad Eizner is a Solutions Architect at Amazon Web Services. He works with AWS enterprise customers to help them architect and build solutions in the cloud and achieve their goals.

Author: Ori Nakar