Talk to your slide deck using multimodal foundation models on Amazon Bedrock – Part 3

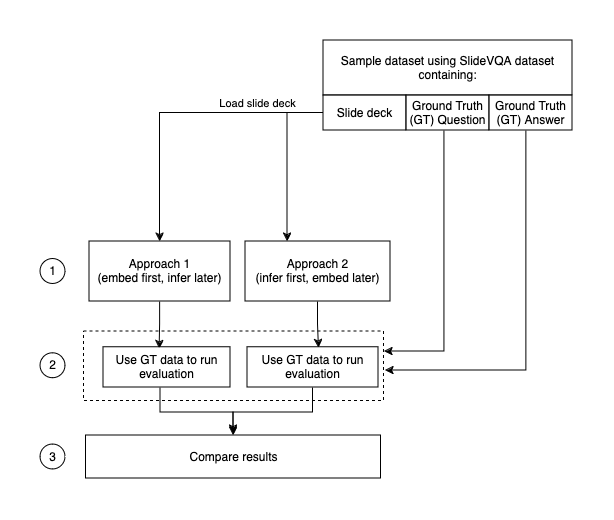

In this series, we share two approaches to gain insights on multimodal data like text, images, and charts. In Part 1, we presented an “embed first, infer later” solution that uses the Amazon Titan Multimodal Embeddings foundation model (FM) to convert individual slides from a slide deck into embeddings. We stored the embeddings in a vector database and then used the Large Language-and-Vision Assistant (LLaVA 1.5-7b) model to generate text responses to user questions based on the most similar slide retrieved from the vector database. Part 1 uses AWS services including Amazon Bedrock, Amazon SageMaker, and Amazon OpenSearch Serverless.

In Part 2, we demonstrated a different approach: “infer first, embed later.” We used Anthropic’s Claude 3 Sonnet on Amazon Bedrock to generate text descriptions for each slide in the slide deck. These descriptions are then converted into text embeddings using the Amazon Titan Text Embeddings model and stored in a vector database. Then we used Anthropic’s Claude 3 Sonnet to generate answers to user questions based on the most relevant text description retrieved from the vector database.

In this post, we evaluate the results from both approaches using ground truth provided by SlideVQA[1], an open source visual question answering dataset. You can test both approaches and evaluate the results to find the best fit for your datasets. The code for this series is available in the GitHub repo.

Comparison of approaches

SlideVQA is a collection of publicly available slide decks, each composed of multiple slides (in JPG format) and questions based on the information in the slide decks. It allows a system to select a set of evidence images and answer the question. We use SlideVQA as the single source of truth to compare the results. It’s important that you follow the Amazon Bedrock data protection policies when using public datasets.

This post follows the process depicted in the following diagram. For more details about the architecture, refer to the solution overview and design in Parts 1 and 2 of the series.

We selected 100 random questions from SlideVQA to create a sample dataset to test solutions from Part 1 and Part 2.

The responses to the questions in the sample dataset are as concise as possible, as shown in the following example:

The responses from large language models (LLMs) are quite verbose:

The following sections briefly discuss the solutions and dive into the evaluation and pricing for each approach.

Approach 1: Embed first, infer later

Slide decks are converted into PDF images, one per slide, and embedded using the Amazon Titan Multimodal Embeddings model, resulting in a vector embedding of 1,024 dimensions. The embeddings are stored in an OpenSearch Serverless index, which serves as the vector store for our Retrieval Augmented Generation (RAG) solution. The embeddings are ingested using an Amazon OpenSearch Ingestion pipeline.
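The embedding step above can be sketched as a small helper that builds the request body for one slide image. This is a minimal sketch rather than the code from the series repo: the function name `build_image_embedding_request` is hypothetical, and the body is assumed to be sent with the Amazon Bedrock runtime `invoke_model` call, which is shown only in a comment so the example runs without AWS credentials.

```python
import base64
import json

# Model ID for Amazon Titan Multimodal Embeddings on Amazon Bedrock.
MODEL_ID = "amazon.titan-embed-image-v1"


def build_image_embedding_request(image_bytes: bytes, dimensions: int = 1024) -> str:
    """Return the JSON request body for embedding a single slide image.

    Hypothetical helper for illustration; not taken from the series repo.
    """
    if dimensions not in (256, 384, 1024):
        raise ValueError("Titan Multimodal Embeddings supports 256, 384, or 1024 dimensions")
    body = {
        # The image is passed as a base64-encoded string.
        "inputImage": base64.b64encode(image_bytes).decode("utf-8"),
        "embeddingConfig": {"outputEmbeddingLength": dimensions},
    }
    return json.dumps(body)


# Assumed usage with boto3 (not executed here):
#   client = boto3.client("bedrock-runtime")
#   response = client.invoke_model(modelId=MODEL_ID, body=build_image_embedding_request(data))
#   embedding = json.loads(response["body"].read())["embedding"]
```

The default of 1,024 dimensions matches the embedding size described above; smaller sizes trade retrieval quality for storage.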

Each question is converted into embeddings using the Amazon Titan Multimodal Embeddings model, and an OpenSearch vector search is performed using these embeddings. We performed a k-nearest neighbor (k-NN) search to retrieve the most relevant embedding matching the question. The metadata of the response from the OpenSearch index contains a path to the image corresponding to the most relevant slide.
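As a rough illustration of the retrieval step, the following sketch builds an OpenSearch k-NN query body from a question embedding. The field name `vector_embedding` and the helper name `build_knn_query` are assumptions made for this example; use the vector field name defined in your own index mapping.

```python
from typing import Sequence


def build_knn_query(question_embedding: Sequence[float], k: int = 1,
                    vector_field: str = "vector_embedding") -> dict:
    """Build an OpenSearch k-NN search body for a question embedding.

    `vector_field` is a hypothetical field name; it must match the
    knn_vector field in the index mapping.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    return {
        "size": k,
        # Leave the raw vector out of the hits; only the metadata
        # (for example, the image path) is needed downstream.
        "_source": {"excludes": [vector_field]},
        "query": {
            "knn": {
                vector_field: {"vector": list(question_embedding), "k": k}
            }
        },
    }


# Assumed usage with the opensearch-py client (not executed here):
#   hits = client.search(index=index_name, body=build_knn_query(embedding))["hits"]["hits"]
```

With `k=1` the search returns only the single most similar slide, which mirrors the retrieval described above.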

The following prompt is created by combining the question and the image path, and is sent to Anthropic’s Claude 3 Sonnet to respond to the question with a concise answer:

We used Anthropic’s Claude 3 Sonnet instead of LLaVA 1.5-7b as mentioned in the solution for Part 1. The approach remains the same, “embed first, infer later,” but the model that compiles the final response is changed for simplicity and comparability between approaches.
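A multimodal request to Anthropic's Claude 3 Sonnet on Amazon Bedrock could be assembled roughly as follows. This is a sketch under stated assumptions: the instruction text in `HYPOTHETICAL_INSTRUCTION` is a placeholder and is not the prompt used in this evaluation, and the helper name `build_claude_request` is invented for illustration. The image bytes are assumed to have been read from the image path returned by the vector search.

```python
import base64
import json

# Model ID for Anthropic's Claude 3 Sonnet on Amazon Bedrock.
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"

# Placeholder wording, NOT the prompt used in the evaluation.
HYPOTHETICAL_INSTRUCTION = "Answer the question using only the slide image. Be as concise as possible."


def build_claude_request(question: str, image_bytes: bytes,
                         media_type: str = "image/jpeg", max_tokens: int = 100) -> str:
    """Return a Claude 3 Messages API body containing one image and one question."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "image",
                        "source": {
                            "type": "base64",
                            "media_type": media_type,
                            "data": base64.b64encode(image_bytes).decode("utf-8"),
                        },
                    },
                    {"type": "text", "text": f"{HYPOTHETICAL_INSTRUCTION}\n\nQuestion: {question}"},
                ],
            }
        ],
    }
    return json.dumps(body)
```

Keeping `max_tokens` small is one way to encourage short answers that are easier to compare against concise ground truth.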

A response for each question in the dataset is recorded in JSON format and compared to the ground truth provided by SlideVQA.

This approach retrieved a response for 78% of the 100 questions in the sample dataset and achieved 50% accuracy on the final responses.
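To make the two metrics concrete, the following sketch computes a response rate and an accuracy from recorded answers. The matching rule is an assumption: this post does not specify how responses were compared to the ground truth, so the example uses a simple normalized exact match and counts accuracy over all questions. The record keys `response` and `ground_truth` are hypothetical.

```python
import re
import string
from typing import Dict, List, Optional


def normalize(answer: str) -> str:
    """Lowercase, collapse whitespace, and trim surrounding punctuation."""
    collapsed = re.sub(r"\s+", " ", answer.lower())
    return collapsed.strip(string.punctuation + " ")


def score(records: List[Dict[str, Optional[str]]]) -> Dict[str, float]:
    """Return the response rate and accuracy over all questions.

    Assumption: a missing or empty "response" means nothing was retrieved,
    and a response is correct only on a normalized exact match.
    """
    total = len(records)
    if total == 0:
        return {"response_rate": 0.0, "accuracy": 0.0}
    answered = [r for r in records if r.get("response")]
    correct = sum(
        1 for r in answered
        if normalize(r["response"]) == normalize(r["ground_truth"])
    )
    return {"response_rate": len(answered) / total, "accuracy": correct / total}
```

A stricter or looser matching rule (for example, substring matching or an LLM-based judge) would change the reported accuracy, so the rule should be fixed before comparing approaches.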

Approach 2: Infer first, embed later

Slide decks are converted into PDF images, one per slide, and passed to Anthropic’s Claude 3 Sonnet to generate a text description. The description is sent to the Amazon Titan Text Embeddings model to generate vector embeddings with 1,536 dimensions. The embeddings are ingested into an OpenSearch Serverless index using an OpenSearch Ingestion pipeline.

Each question is converted into embeddings using the Amazon Titan Text Embeddings model and an OpenSearch vector search is performed using these embeddings. We performed a k-NN search to retrieve the most relevant embedding matching the question. The metadata of the response from the OpenSearch index contains the image description corresponding to the most relevant slide.

We create a prompt with the question and image description and pass it to Anthropic’s Claude 3 Sonnet to receive a precise answer. The following is the prompt template:

This approach retrieved a response for 75% of the 100 questions in the sample dataset and achieved 44% accuracy on the final responses.

Analysis of results

In our testing, 50% or fewer of the responses from either approach matched the ground truth for the sample dataset. The sample dataset contains a random selection of slide decks covering a wide variety of topics, including retail, healthcare, academic, technology, personal, and travel. Therefore, for a generic question such as “What are examples of tools that can be used?”, which lacks additional context, the nearest match could come from any of these topics, leading to inaccurate results, especially when all embeddings are ingested into the same OpenSearch index. Techniques such as hybrid search, pre-filtering based on metadata, and reranking can improve the retrieval accuracy.

One of the solutions is to retrieve more results (increase the k value) and reorder them to keep the most relevant ones; this technique is called reranking. We share additional ways to improve the accuracy of the results later in this post.
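The retrieve-then-reorder idea can be sketched as follows. This is an illustration only: it assumes each retrieved candidate carries a text `description` (as in Approach 2), and it uses a simple word-overlap (Jaccard) score as a stand-in for a real reranking model. The function and key names are hypothetical.

```python
import re
from typing import Dict, List, Set


def tokenize(text: str) -> Set[str]:
    """Split text into a set of lowercase alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def lexical_overlap(question: str, description: str) -> float:
    """Jaccard similarity between question tokens and description tokens."""
    q, d = tokenize(question), tokenize(description)
    if not q or not d:
        return 0.0
    return len(q & d) / len(q | d)


def rerank(question: str, candidates: List[Dict[str, str]], top_n: int = 1) -> List[Dict[str, str]]:
    """Reorder the k retrieved candidates by a second score and keep the top_n.

    The sort is stable, so candidates with equal scores keep their
    original vector-search order.
    """
    ordered = sorted(
        candidates,
        key=lambda c: lexical_overlap(question, c["description"]),
        reverse=True,
    )
    return ordered[:top_n]
```

In practice the candidates would come from a k-NN search with a larger `k`, and the overlap score would typically be replaced by a dedicated reranking model.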

The final prompts to Anthropic’s Claude 3 Sonnet in our analysis included instructions to provide a concise answer in as few words as possible to be able to compare with the ground truth. Your responses will depend on your prompts to the LLM.

Pricing

Pricing depends on the modality, provider, and model used. For more details, refer to Amazon Bedrock pricing. We use the On-Demand and Batch pricing modes in our analysis, which allow you to use FMs on a pay-as-you-go basis without having to make time-based term commitments. For text-generation models, you are charged for every input token processed and every output token generated. For embeddings models, you are charged for every input token processed.

The following tables show the price per question for each approach. We calculated the average number of input and output tokens based on our sample dataset for the us-east-1 AWS Region; pricing may vary based on your datasets and Region used.

You can use the following tables for guidance. Refer to the Amazon Bedrock pricing website for additional information.

Approach 1

| Model | Description | Input: Price per 1,000 Tokens / Price per Input Image | Input: Number of Tokens | Input: Price | Output: Price per 1,000 Tokens | Output: Number of Tokens | Output: Price |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Amazon Titan Multimodal Embeddings | Slide/image embedding | $0.00006 | 1 | $0.00000006 | $0.000 | 0 | $0.00000 |
| Amazon Titan Multimodal Embeddings | Question embedding | $0.00080 | 20 | $0.00001600 | $0.000 | 0 | $0.00000 |
| Anthropic’s Claude 3 Sonnet | Final response | $0.00300 | 700 | $0.00210000 | $0.015 | 8 | $0.00012 |
| Cost per input/output | | | | $0.00211606 | | | $0.00012 |
| Total cost per question | | | | | | | $0.00224 |

Approach 2

| Model | Description | Input: Price per 1,000 Tokens / Price per Input Image | Input: Number of Tokens | Input: Price | Output: Price per 1,000 Tokens | Output: Number of Tokens | Output: Price |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Anthropic’s Claude 3 Sonnet | Slide/image description | $0.00300 | 4523 | $0.01356900 | $0.015 | 350 | $0.00525 |
| Amazon Titan Text Embeddings | Slide/image description embedding | $0.00010 | 350 | $0.00003500 | $0.000 | 0 | $0.00000 |
| Amazon Titan Text Embeddings | Question embedding | $0.00010 | 20 | $0.00000200 | $0.000 | 0 | $0.00000 |
| Anthropic’s Claude 3 Sonnet | Final response | $0.00300 | 700 | $0.00210000 | $0.015 | 8 | $0.00012 |
| Cost per input/output | | | | $0.01570600 | | | $0.00537 |
| Total cost per question | | | | | | | $0.02108 |
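The per-question totals follow from multiplying each token count by its price per 1,000 tokens and summing across the model calls. The following sketch reproduces the Approach 2 total from the figures in the table; the `ModelCall` class is a hypothetical helper introduced only for this example.

```python
from dataclasses import dataclass


@dataclass
class ModelCall:
    """One model invocation, priced per 1,000 input and output tokens."""
    input_price_per_1k: float
    input_tokens: int
    output_price_per_1k: float = 0.0
    output_tokens: int = 0

    def cost(self) -> float:
        return (
            self.input_price_per_1k * self.input_tokens
            + self.output_price_per_1k * self.output_tokens
        ) / 1000


# Figures taken from the Approach 2 table.
approach_2 = [
    ModelCall(0.003, 4523, 0.015, 350),  # Claude 3 Sonnet: slide/image description
    ModelCall(0.0001, 350),              # Titan Text Embeddings: description embedding
    ModelCall(0.0001, 20),               # Titan Text Embeddings: question embedding
    ModelCall(0.003, 700, 0.015, 8),     # Claude 3 Sonnet: final response
]

total = sum(call.cost() for call in approach_2)
print(f"${total:.5f}")  # prints "$0.02108"
```

Substituting your own average token counts and the current prices for your Region gives an estimate for your dataset.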

Clean up

To avoid incurring charges, delete any resources from Parts 1 and 2 of the solution. You can do this by deleting the stacks using the AWS CloudFormation console.

Conclusion

In Parts 1 and 2 of this series, we explored ways to use the power of multimodal FMs such as Amazon Titan Multimodal Embeddings, Amazon Titan Text Embeddings, and Anthropic’s Claude 3 Sonnet. In this post, we compared the approaches from an accuracy and pricing perspective.

Code for all parts of the series is available in the GitHub repo. We encourage you to deploy both approaches and explore different Anthropic Claude models available on Amazon Bedrock. You can discover new information and uncover new perspectives using your organization’s slide content with either approach. Compare the two approaches to identify a better workflow for your slide decks.

With generative AI rapidly developing, there are several ways to improve the results and approach the problem. We are exploring performing a hybrid search and adding search filters by extracting entities from the question to improve the results. Part 4 in this series will explore these concepts in detail.

Portions of this code are released under the Apache 2.0 License.

Resources

[1] Tanaka, Ryota & Nishida, Kyosuke & Nishida, Kosuke & Hasegawa, Taku & Saito, Itsumi & Saito, Kuniko. (2023). SlideVQA: A Dataset for Document Visual Question Answering on Multiple Images. Proceedings of the AAAI Conference on Artificial Intelligence. 37. 13636-13645. 10.1609/aaai.v37i11.26598.

About the Authors

Archana Inapudi is a Senior Solutions Architect at AWS, supporting a strategic customer. She has over a decade of cross-industry expertise leading strategic technical initiatives. Archana is an aspiring member of the AI/ML technical field community at AWS. Prior to joining AWS, Archana led a migration from traditional siloed data sources to Hadoop at a healthcare company. She is passionate about using technology to accelerate growth, provide value to customers, and achieve business outcomes.

Manju Prasad is a Senior Solutions Architect at Amazon Web Services. She focuses on providing technical guidance in a variety of technical domains, including AI/ML. Prior to joining AWS, she designed and built solutions for companies in the financial services sector and also for a startup. She has worked in all layers of the software stack, ranging from webdev to databases, and has experience in all levels of the software development lifecycle. She is passionate about sharing knowledge and fostering interest in emerging talent.

Amit Arora is an AI and ML Specialist Architect at Amazon Web Services, helping enterprise customers use cloud-based machine learning services to rapidly scale their innovations. He is also an adjunct lecturer in the MS data science and analytics program at Georgetown University in Washington, D.C.

Antara Raisa is an AI and ML Solutions Architect at Amazon Web Services supporting strategic customers based out of Dallas, Texas. She also has previous experience working with large enterprise partners at AWS, where she worked as a Partner Success Solutions Architect for digital-centered customers.

Author: Archana Inapudi