Transform one-on-one customer interactions: Build speech-capable order processing agents with AWS and generative AI

One-on-one order taking still relies largely on human attendants, even in settings like drive-thru coffee shops and fast-food establishments. This traditional approach poses several challenges: it depends heavily on manual processes, struggles to scale with increasing customer demand, introduces the potential for human error, and operates only within specific hours of availability. In competitive markets, businesses that rely solely on manual processes might also find it challenging to deliver efficient, competitive service. Despite technological advancements, the human-centric model remains deeply ingrained in order processing, leading to these limitations.

The prospect of utilizing technology for one-on-one order processing assistance has been available for some time. However, existing solutions can often fall into two categories: rule-based systems that demand substantial time and effort for setup and upkeep, or rigid systems that lack the flexibility required for human-like interactions with customers. As a result, businesses and organizations face challenges in swiftly and efficiently implementing such solutions. Fortunately, with the advent of generative AI and large language models (LLMs), it’s now possible to create automated systems that can handle natural language efficiently, and with an accelerated on-ramping timeline.

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon via a single API, along with a broad set of capabilities you need to build generative AI applications with security, privacy, and responsible AI. In addition to Amazon Bedrock, you can use other AWS services like Amazon SageMaker JumpStart and Amazon Lex to create fully automated and easily adaptable generative AI order processing agents.

In this post, we show you how to build a speech-capable order processing agent using Amazon Lex, Amazon Bedrock, and AWS Lambda.

Solution overview

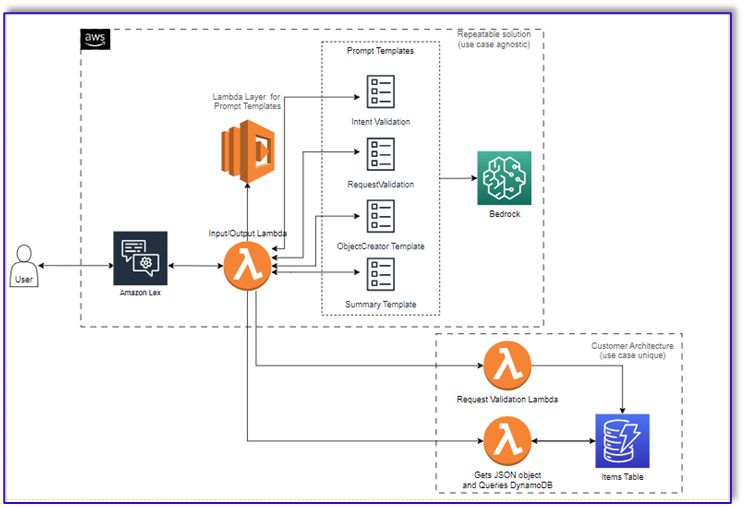

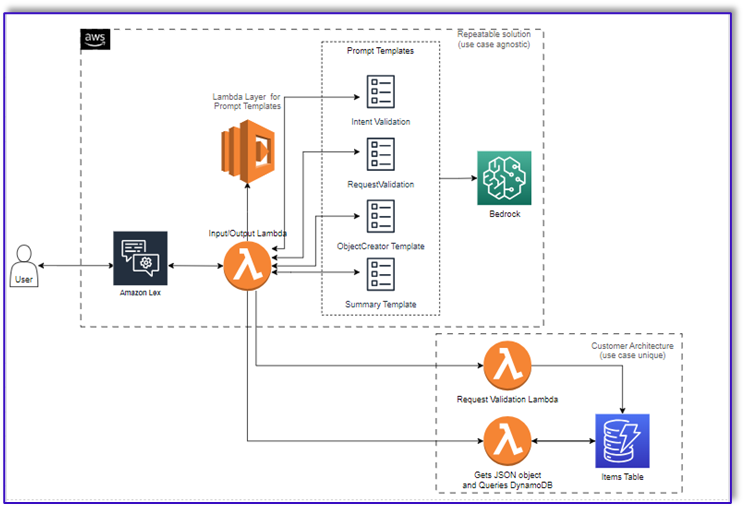

The following diagram illustrates our solution architecture.

The workflow consists of the following steps:

- A customer places the order using Amazon Lex.

- The Amazon Lex bot interprets the customer’s intents and triggers a DialogCodeHook.

- A Lambda function pulls the appropriate prompt template from the Lambda layer and formats model prompts by adding the customer input in the associated prompt template.

- The RequestValidation prompt verifies the order with the menu item and lets the customer know via Amazon Lex if there’s something they want to order that isn’t part of the menu and will provide recommendations. The prompt also performs a preliminary validation for order completeness.

- The ObjectCreator prompt converts the natural language requests into a data structure (JSON format).

- The customer validator Lambda function verifies the required attributes for the order and confirms if all necessary information is present to process the order.

- A customer Lambda function takes the data structure as an input for processing the order and passes the order total back to the orchestrating Lambda function.

- The orchestrating Lambda function calls the Amazon Bedrock LLM endpoint to generate a final order summary including the order total from the customer database system (for example, Amazon DynamoDB).

- The order summary is communicated back to the customer via Amazon Lex. After the customer confirms the order, the order will be processed.

Prerequisites

This post assumes that you have an active AWS account and familiarity with the following concepts and services:

- Generative AI

- Amazon Bedrock

- Anthropic Claude V2

- Amazon DynamoDB

- AWS Lambda

- Amazon Lex

- Amazon Simple Storage Service (Amazon S3)

Also, in order to access Amazon Bedrock from the Lambda functions, you need to make sure the Lambda runtime has the following libraries:

- boto3>=1.28.57

- awscli>=1.29.57

- botocore>=1.31.57

This can be done with a Lambda layer or by using a specific AMI with the required libraries.

Furthermore, these libraries are required when calling the Amazon Bedrock API from Amazon SageMaker Studio. This can be done by running a cell with the following code:
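The original install cell is not reproduced here. As a minimal sketch, assuming a standard Python kernel with pip available, the minimum versions listed above could be installed from a notebook cell as follows. The helper names pip_install_command and install_requirements are hypothetical.

```python
import subprocess
import sys

# Minimum versions listed in the prerequisites of this post.
REQUIRED = ["boto3>=1.28.57", "awscli>=1.29.57", "botocore>=1.31.57"]


def pip_install_command(requirements=REQUIRED):
    """Build a pip command that targets the interpreter running this kernel."""
    return [sys.executable, "-m", "pip", "install", "--upgrade", *requirements]


def install_requirements():
    """Run pip for the listed packages (hypothetical helper)."""
    subprocess.check_call(pip_install_command())


# Uncomment to run the installation from a notebook cell:
# install_requirements()
```

After installing, restart the notebook kernel so the upgraded libraries are picked up.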

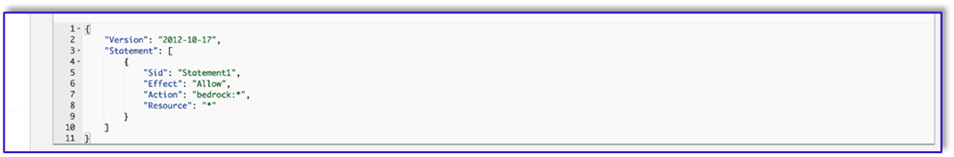

Finally, you create the following policy and later attach it to any role accessing Amazon Bedrock:
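The policy document itself is not reproduced in the text. The following is a minimal sketch of what such a policy could look like, assuming that the role only needs to invoke models; the statement ID and the choice of the bedrock:InvokeModel action are assumptions, and the original policy may be broader.

```python
import json


def bedrock_invoke_policy(resource="*"):
    """Build an IAM policy document that allows invoking Bedrock models.

    Assumption: only bedrock:InvokeModel is needed. Pass a model ARN as
    `resource` to narrow the scope further.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowBedrockInvoke",  # hypothetical statement ID
                "Effect": "Allow",
                "Action": ["bedrock:InvokeModel"],
                "Resource": resource,
            }
        ],
    }


policy_json = json.dumps(bedrock_invoke_policy(), indent=2)
print(policy_json)
```

The printed JSON can be pasted into the IAM policy editor and adjusted as needed.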

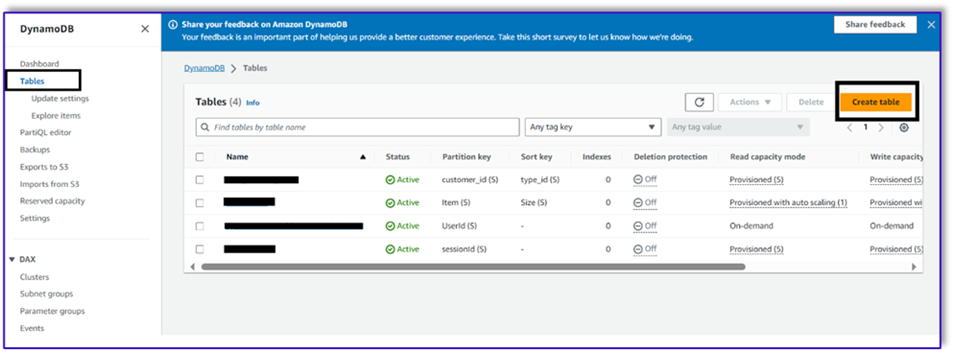

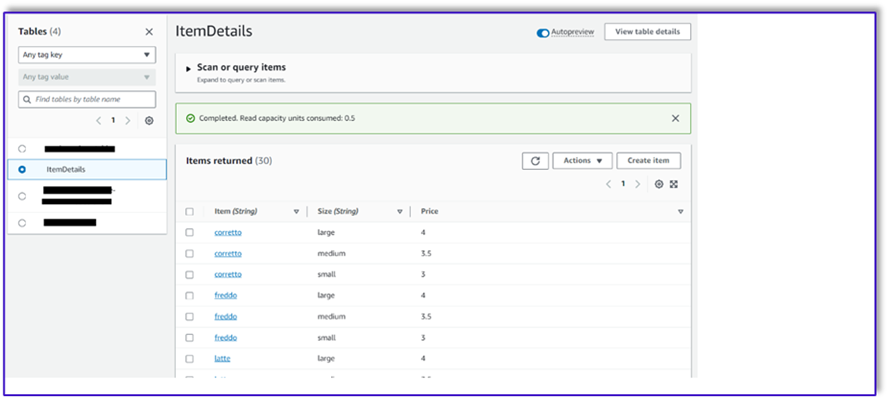

Create a DynamoDB table

In our specific scenario, we’ve created a DynamoDB table as our customer database system, but you could also use Amazon Relational Database Service (Amazon RDS). Complete the following steps to provision your DynamoDB table (or customize the settings as needed for your use case):

- On the DynamoDB console, choose Tables in the navigation pane.

- Choose Create table.

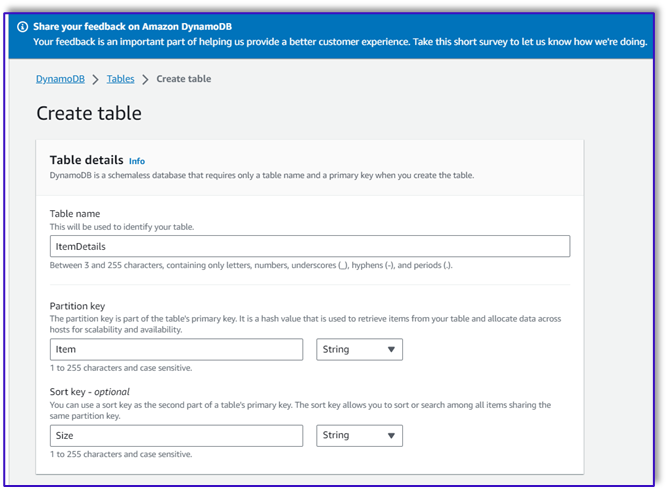

- For Table name, enter a name (for example, ItemDetails).

- For Partition key, enter a key (for this post, we use Item).

- For Sort key, enter a key (for this post, we use Size).

- Choose Create table.

Now you can load the data into the DynamoDB table. For this post, we use a CSV file. You can load the data to the DynamoDB table using Python code in a SageMaker notebook.

First, we need to set up a profile named dev.

- Open a new terminal in SageMaker Studio and run the AWS CLI command that configures a named profile (for the dev profile, typically aws configure --profile dev):

This command will prompt you to enter your AWS access key ID, secret access key, default AWS Region, and output format.

- Return to the SageMaker notebook and write Python code that connects to DynamoDB using the Boto3 library. The code creates a session using the AWS profile named dev and then creates a DynamoDB client using that session. The following is the code sample to load the data:
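The original code sample is not included in the text. A minimal sketch of the loading step might look like the following, assuming a CSV file whose header row contains at least the Item and Size key columns. The Price column in the usage note, the helper names, and the use of the Boto3 resource API (rather than the low-level client mentioned above) are assumptions made for brevity.

```python
import csv
import io


def rows_to_items(csv_text):
    """Parse CSV text into a list of dicts, one per DynamoDB item.

    All values stay strings; convert numeric columns if you need numbers.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    return [dict(row) for row in reader]


def load_items(table, items):
    """Write the items through the table's batch writer."""
    with table.batch_writer() as batch:
        for item in items:
            batch.put_item(Item=item)


def load_csv_to_dynamodb(csv_path, table_name="ItemDetails", profile="dev"):
    """Load a CSV file into DynamoDB using the named AWS profile."""
    import boto3  # imported here so the helpers above work without AWS access

    session = boto3.Session(profile_name=profile)
    table = session.resource("dynamodb").Table(table_name)
    with open(csv_path, newline="") as handle:
        items = rows_to_items(handle.read())
    load_items(table, items)
    return len(items)
```

For example, a file with the header Item,Size,Price and one row per menu entry would be loaded with load_csv_to_dynamodb("menu.csv"), where menu.csv is a hypothetical file name.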

Alternatively, you can use NoSQL Workbench or other tools to quickly load the data to your DynamoDB table.

The following is a screenshot after the sample data is inserted into the table.

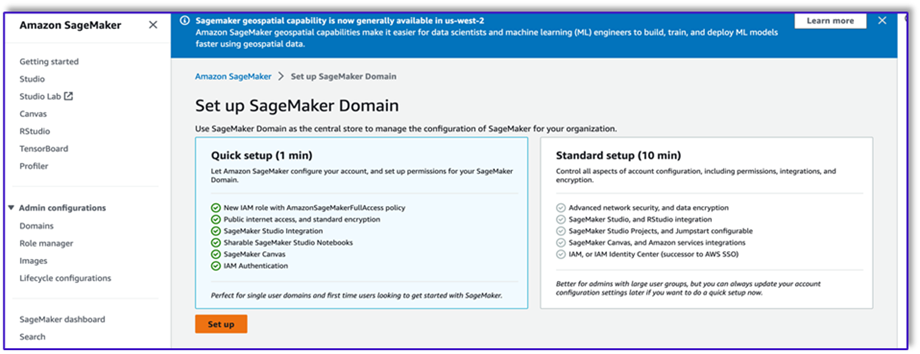

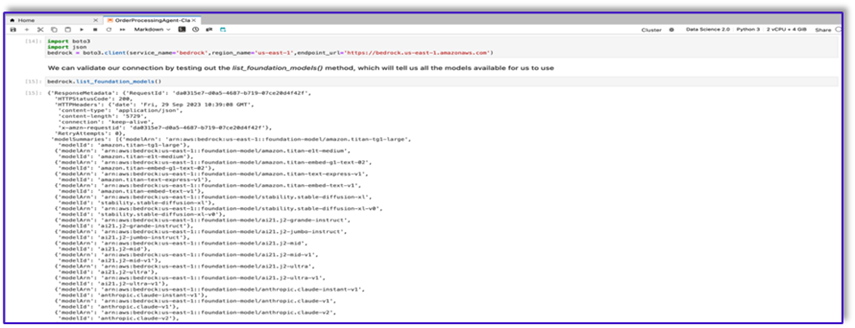

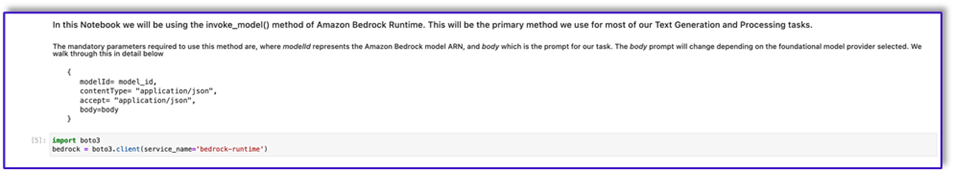

Create templates in a SageMaker notebook using the Amazon Bedrock invocation API

To create our prompt template for this use case, we use Amazon Bedrock. You can access Amazon Bedrock from the AWS Management Console and via API invocations. In our case, we access Amazon Bedrock via API from the convenience of a SageMaker Studio notebook to create not only our prompt template, but our complete API invocation code that we can later use on our Lambda function.

- On the SageMaker console, access an existing SageMaker Studio domain or create a new one to access Amazon Bedrock from a SageMaker notebook.

- After you create the SageMaker domain and user, choose the user, choose Launch, and then choose Studio. This opens a JupyterLab environment.

- When the JupyterLab environment is ready, open a new notebook and begin importing the necessary libraries.

There are many FMs available via the Amazon Bedrock Python SDK. In this case, we use Claude V2, a powerful foundation model developed by Anthropic.

The order processing agent needs a few different templates. This can change depending on the use case, but we have designed a general workflow that can apply to multiple settings. For this use case, the Amazon Bedrock LLM template will accomplish the following:

- Validate the customer intent

- Validate the request

- Create the order data structure

- Pass a summary of the order to the customer

- To invoke the model, create a bedrock-runtime object from Boto3.
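As a minimal sketch of this step, assuming the Claude V2 text-completion request format, the client creation and model call could look like the following. The function names, the default Region, and the inference parameter values are assumptions.

```python
import json

MODEL_ID = "anthropic.claude-v2"  # Claude V2 model ID on Amazon Bedrock


def prepare_body(prompt, max_tokens=500, temperature=0.0):
    """Serialize a Claude V2 text-completion request body."""
    return json.dumps(
        {
            "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
            "max_tokens_to_sample": max_tokens,
            "temperature": temperature,
        }
    )


def invoke_claude(client, prompt):
    """Call the model through a bedrock-runtime client and return its text."""
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=prepare_body(prompt),
        accept="application/json",
        contentType="application/json",
    )
    return json.loads(response["body"].read())["completion"]


def make_client(region_name="us-east-1"):
    """Create the bedrock-runtime client (the Region is an assumption)."""
    import boto3  # imported lazily so prepare_body can be used offline

    return boto3.client("bedrock-runtime", region_name=region_name)
```

Calling invoke_claude(make_client(), "Hello") would then return the model's completion as a string.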

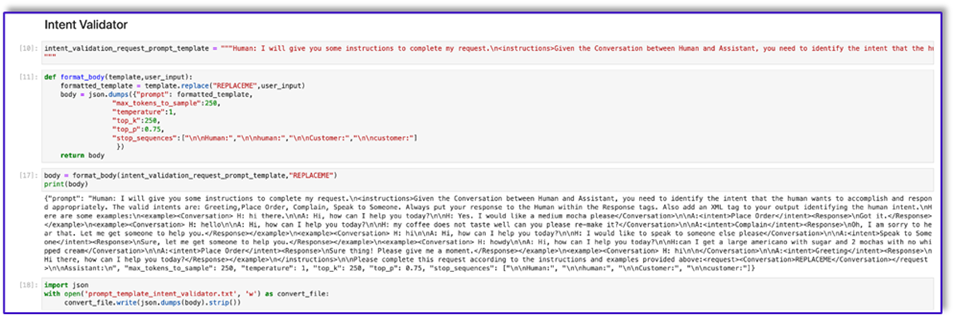

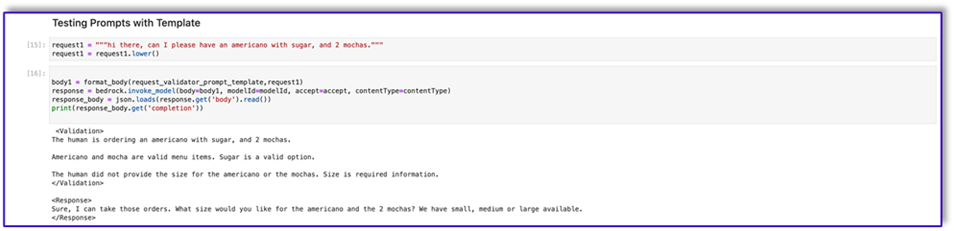

Let’s start by working on the intent validator prompt template. This is an iterative process, but thanks to Anthropic’s prompt engineering guide, you can quickly create a prompt that can accomplish the task.

- Create the first prompt template along with a utility function that will help prepare the body for the API invocations.

The following is the code for prompt_template_intent_validator.txt:

- Save this template into a file in order to upload to Amazon S3 and call from the Lambda function when needed. Save the templates as JSON serialized strings in a text file. The previous screenshot shows the code sample to accomplish this as well.

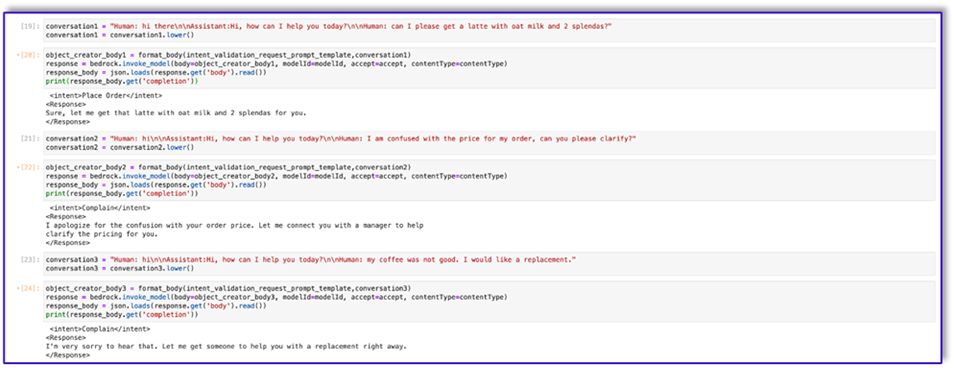

- Repeat the same steps with the other templates.
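The steps above mention saving each template as a JSON serialized string in a text file. A minimal sketch of that round trip follows; the template text is a hypothetical placeholder and not the template used in this post.

```python
import json
from pathlib import Path

# Hypothetical placeholder template; the real templates are not shown here.
EXAMPLE_TEMPLATE = (
    "You are an order-taking assistant.\n"
    "<conversation>{conversation}</conversation>\n"
    "Reply with the customer's intent inside <intent></intent> tags."
)


def save_template(template, path):
    """Write the template as a single JSON-serialized string."""
    Path(path).write_text(json.dumps(template), encoding="utf-8")


def load_template(path):
    """Read a template back into a regular Python string."""
    return json.loads(Path(path).read_text(encoding="utf-8"))
```

Serializing with json.dumps keeps newlines and quotes escaped, so the file holds one line that loads back unchanged.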

The following are some screenshots of the other templates and the results when calling Amazon Bedrock with some of them.

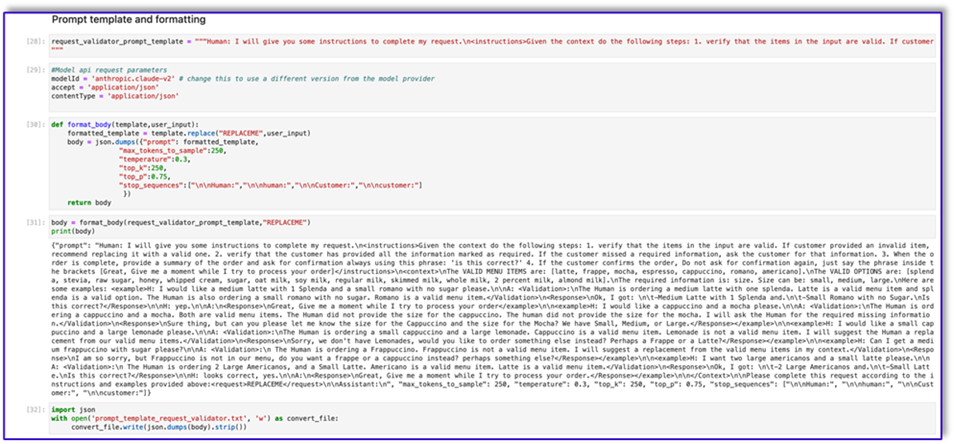

The following is the code for prompt_template_request_validator.txt:

The following is our response from Amazon Bedrock using this template.

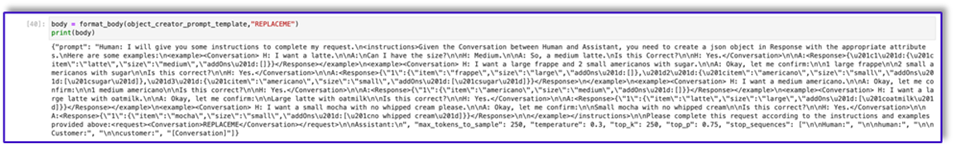

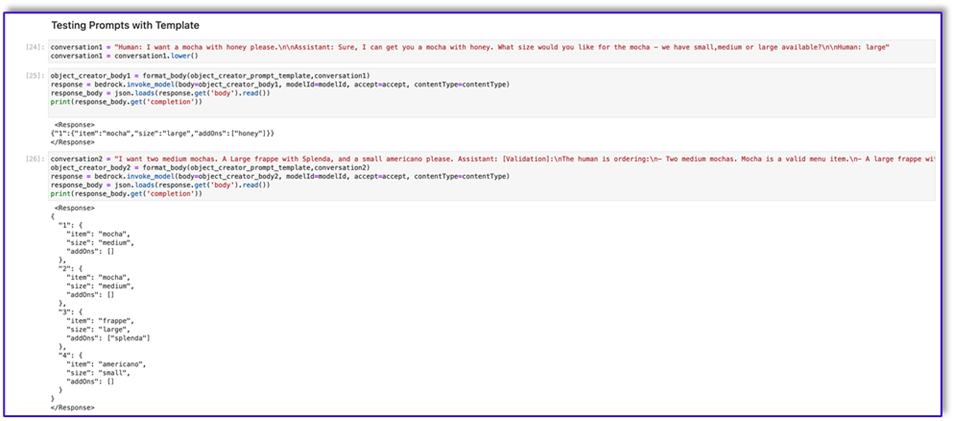

The following is the code for prompt_template_object_creator.txt:

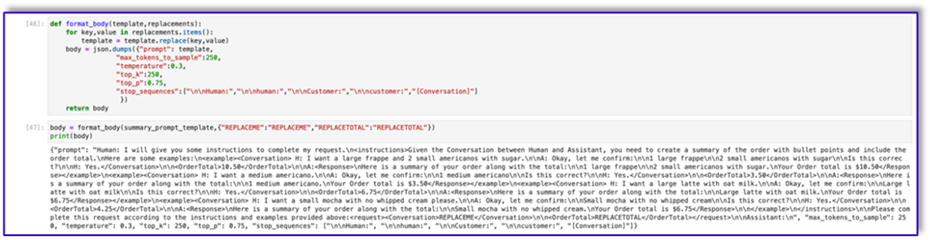

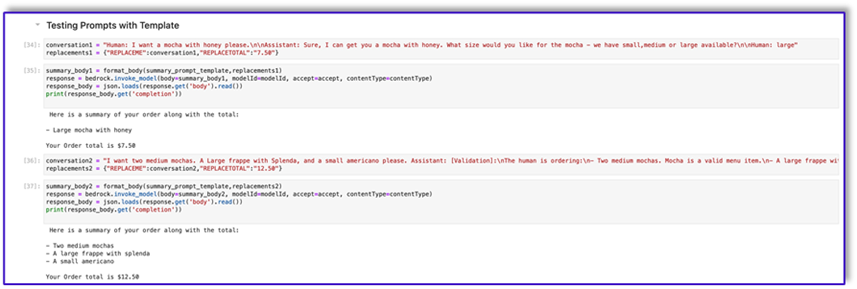

The following is the code for prompt_template_order_summary.txt:

As you can see, we have used our prompt templates to validate menu items, identify missing required information, create a data structure, and summarize the order. The foundation models available on Amazon Bedrock are very powerful, so you could accomplish even more tasks via these templates.

You have completed engineering the prompts and saved the templates to text files. You can now begin creating the Amazon Lex bot and the associated Lambda functions.

Create a Lambda layer with the prompt templates

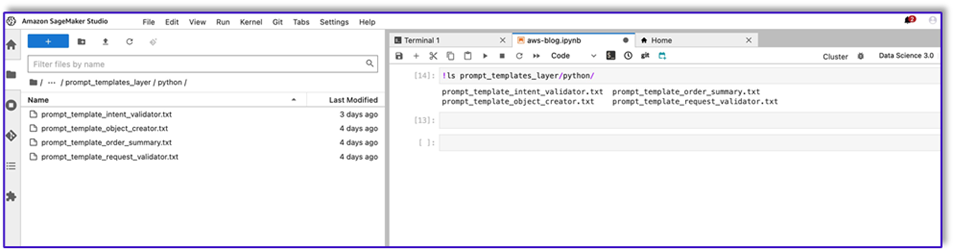

Complete the following steps to create your Lambda layer:

- In SageMaker Studio, create a new folder with a subfolder named python.

- Copy your prompt files to the python folder.

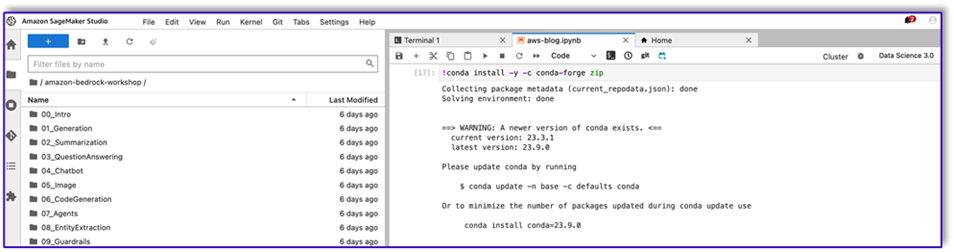

- You can add the ZIP library to your notebook instance by running the following command.

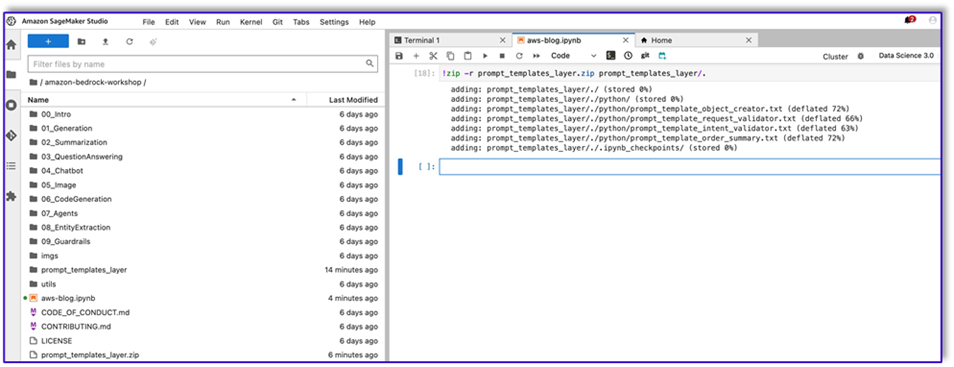

- Now, run the following command to create the ZIP file for uploading to the Lambda layer.

- After you create the ZIP file, you can download the file. Go to Lambda, create a new layer by uploading the file directly or by uploading to Amazon S3 first.

- Then attach this new layer to the orchestration Lambda function.
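The ZIP commands are not reproduced in the text. As an alternative sketch that needs no extra tooling, the archive can be built with Python's standard zipfile module; the function name and folder names in the usage note are assumptions.

```python
import zipfile
from pathlib import Path


def build_layer_zip(layer_root, zip_path):
    """Zip the python/ subfolder so archive paths start with 'python/'."""
    layer_root = Path(layer_root)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for file in sorted((layer_root / "python").rglob("*")):
            if file.is_file():
                # Store paths relative to the layer root, with forward slashes.
                archive.write(file, file.relative_to(layer_root).as_posix())
    return zip_path
```

For example, build_layer_zip("prompt_layer", "prompt_layer.zip") packages a hypothetical prompt_layer/python folder. Lambda extracts layer contents under /opt, so the templates would then be readable from /opt/python inside the function.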

Now your prompt template files are locally stored in your Lambda runtime environment. This will speed up the process during your bot runs.

Create a Lambda layer with the required libraries

Complete the following steps to create your Lambda layer with the required libraries:

- Open an AWS Cloud9 instance environment, create a folder with a subfolder called python.

- Open a terminal inside the python folder.

- Run the following commands from the terminal:

- Run cd .. and position yourself inside your new folder where you also have the python subfolder.

- Run the following command:

- After you create the ZIP file, you can download the file. Go to Lambda, create a new layer by uploading the file directly or by uploading to Amazon S3 first.

- Then attach this new layer to the orchestration Lambda function.

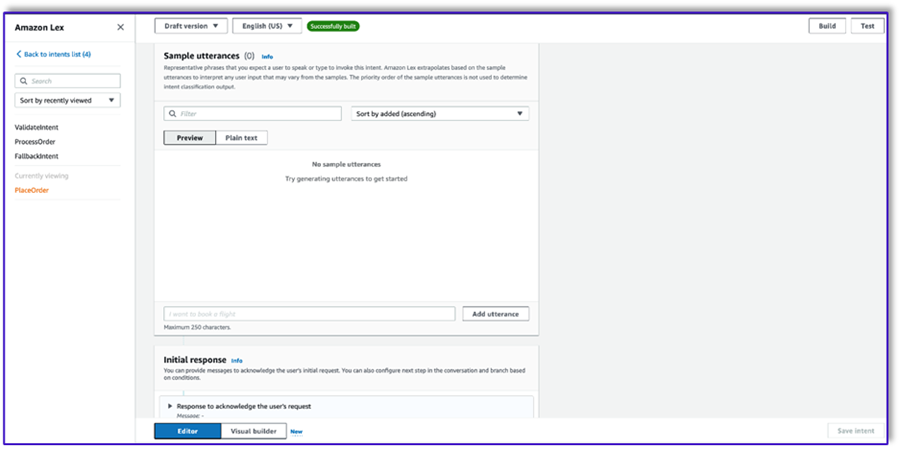

Create the bot in Amazon Lex v2

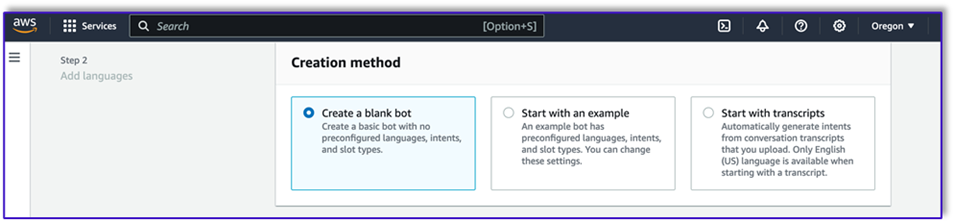

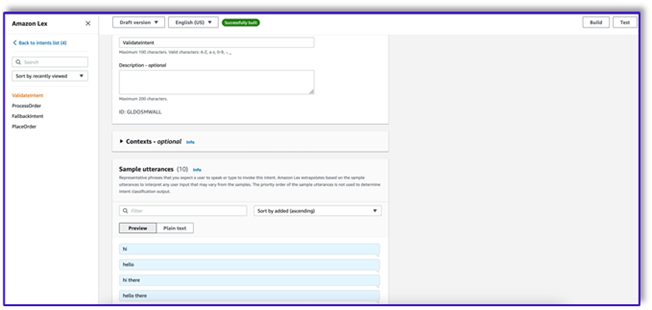

For this use case, we build an Amazon Lex bot that can provide an input/output interface for the architecture in order to call Amazon Bedrock using voice or text from any interface. Because the LLM will handle the conversation piece of this order processing agent, and Lambda will orchestrate the workflow, you can create a bot with three intents and no slots.

- On the Amazon Lex console, create a new bot with the method Create a blank bot.

Now you can add an intent with any appropriate initial utterance for the end-users to start the conversation with the bot. We use simple greetings and add an initial bot response so end-users can provide their requests. When creating the bot, make sure to use a Lambda code hook with the intents; this will trigger a Lambda function that will orchestrate the workflow between the customer, Amazon Lex, and the LLM.

- Add your first intent, which triggers the workflow and uses the intent validation prompt template to call Amazon Bedrock and identify what the customer is trying to accomplish. Add a few simple utterances for end-users to start conversation.

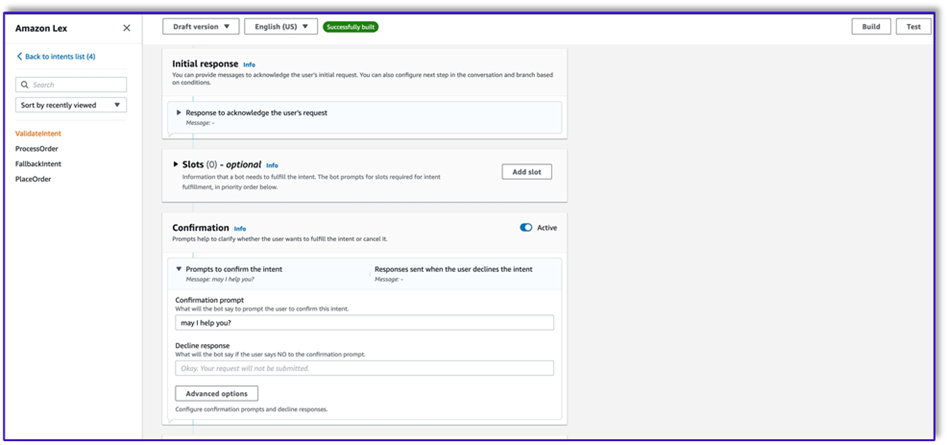

You don’t need to use any slots or initial reading in any of the bot intents. In fact, you don’t need to add utterances to the second or third intents. That is because the LLM will guide Lambda throughout the process.

- Add a confirmation prompt. You can customize this message in the Lambda function later.

- Under Code hooks, select Use a Lambda function for initialization and validation.

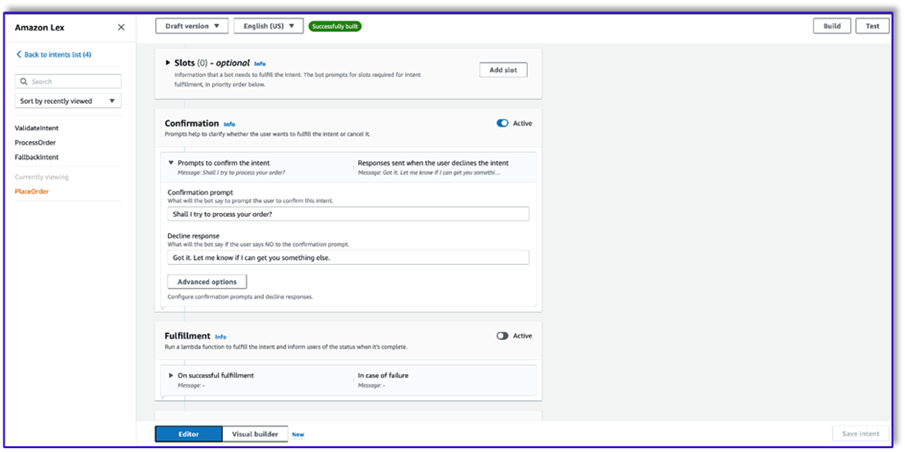

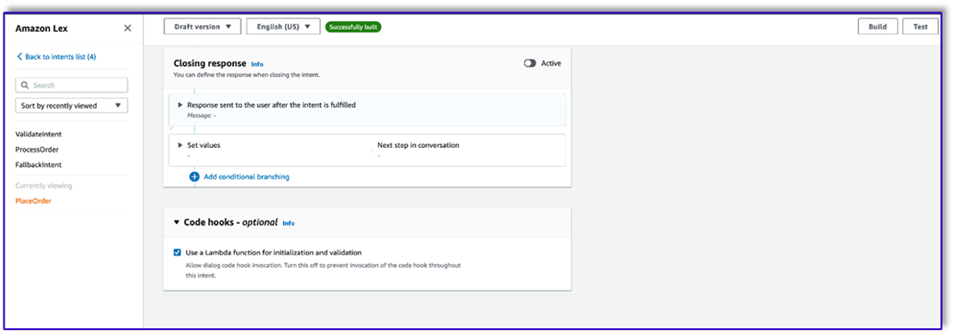

- Create a second intent with no utterance and no initial response. This is the PlaceOrder intent.

When the LLM identifies that the customer is trying to place an order, the Lambda function will trigger this intent and validate the customer request against the menu, and make sure that no required information is missing. Remember that all of this is on the prompt templates, so you can adapt this workflow for any use case by changing the prompt templates.

- Don’t add any slots, but add a confirmation prompt and decline response.

- Select Use a Lambda function for initialization and validation.

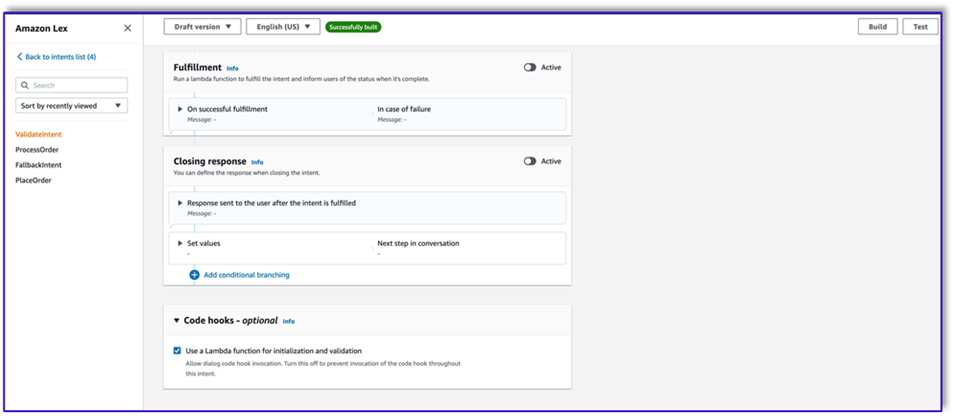

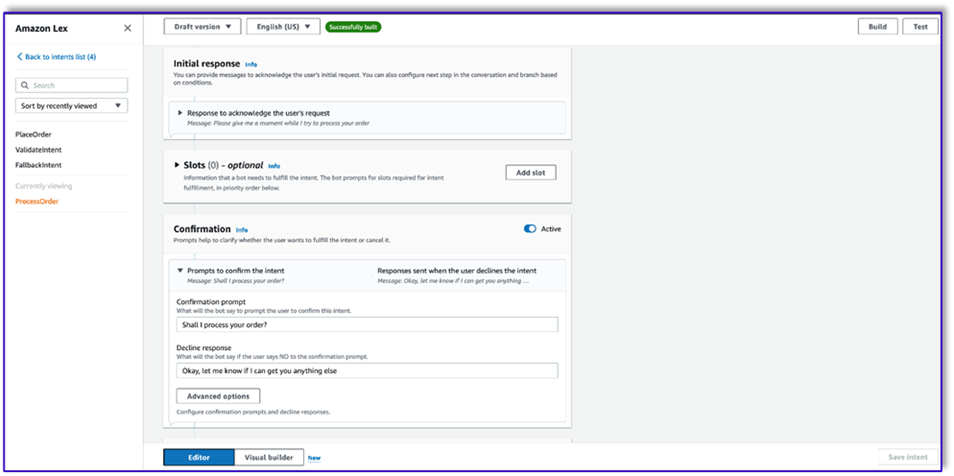

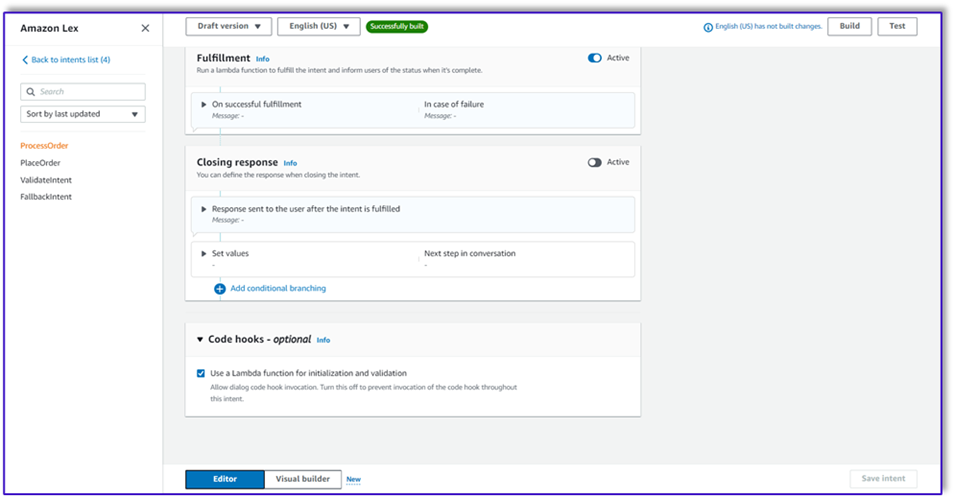

- Create a third intent named ProcessOrder with no sample utterances and no slots.

- Add an initial response, a confirmation prompt, and a decline response.

After the LLM has validated the customer request, the Lambda function triggers the third and last intent to process the order. Here, Lambda will use the object creator template to generate the order JSON data structure to query the DynamoDB table, and then use the order summary template to summarize the whole order along with the total so Amazon Lex can pass it to the customer.

- Select Use a Lambda function for initialization and validation. This can use any Lambda function to process the order after the customer has given the final confirmation.

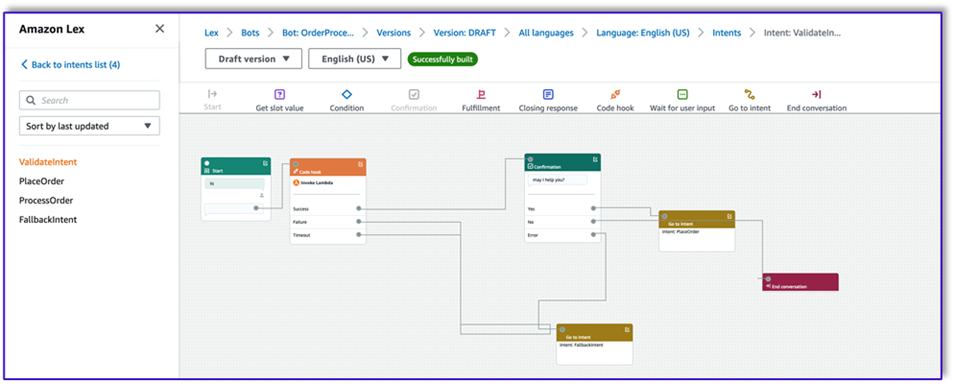

- After you create all three intents, go to the Visual builder for the ValidateIntent, add a go-to intent step, and connect the output of the positive confirmation to that step.

- After you add the go-to intent, edit it and choose the PlaceOrder intent as the intent name.

- Similarly, go to the Visual builder for the PlaceOrder intent and connect the output of the positive confirmation to the ProcessOrder go-to intent. No editing is required for the ProcessOrder intent.

- You now need to create the Lambda function that orchestrates Amazon Lex and calls the DynamoDB table, as detailed in the following section.

Create a Lambda function to orchestrate the Amazon Lex bot

You can now build the Lambda function that orchestrates the Amazon Lex bot and workflow. Complete the following steps:

- Create a Lambda function with the standard execution policy and let Lambda create a role for you.

- In the code window of your function, add a few utility functions that will help: format the prompts by adding the lex context to the template, call the Amazon Bedrock LLM API, extract the desired text from the responses, and more. See the following code:

- Attach the Lambda layer you created earlier to this function.

- Additionally, attach the layer that contains the prompt templates you created.

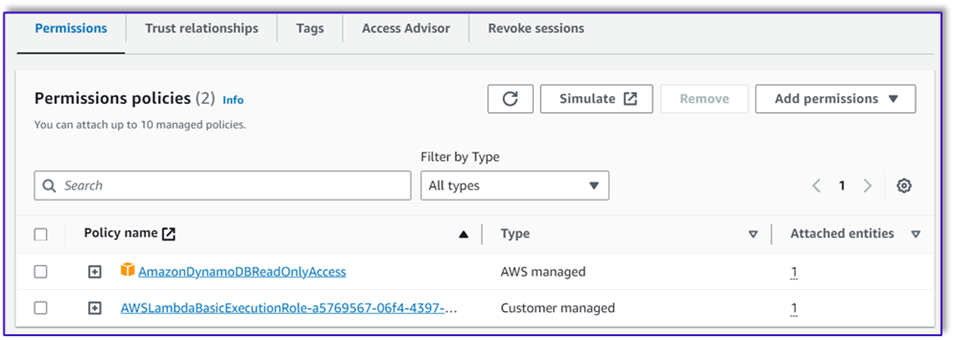

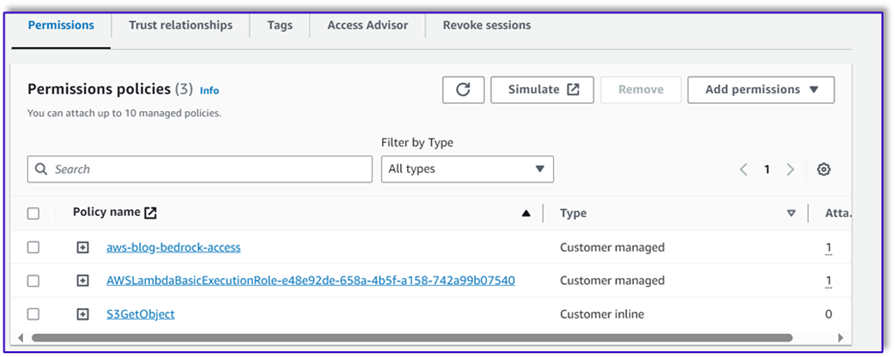

- In the Lambda execution role, attach the policy to access Amazon Bedrock, which was created earlier.
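The utility functions mentioned in the steps above are not reproduced in the text. The following is a minimal sketch of three such helpers, assuming templates with {placeholder} fields and model replies that wrap answers in XML-style tags. The function names and tag convention are assumptions; the response dictionary follows the Amazon Lex V2 Lambda response format.

```python
import re


def format_prompt(template, **fields):
    """Fill {placeholders} in a prompt template with Lex context values.

    Literal braces in a template (for example, JSON samples) must be doubled.
    """
    return template.format(**fields)


def extract_tag(text, tag):
    """Return the text inside <tag>...</tag>, or None if the tag is absent."""
    match = re.search(rf"<{tag}>(.*?)</{tag}>", text, re.DOTALL)
    return match.group(1).strip() if match else None


def lex_response(intent_name, message, dialog_action="Close",
                 state="Fulfilled", session_attributes=None):
    """Build a Lex V2 Lambda response carrying one plain-text message."""
    return {
        "sessionState": {
            "dialogAction": {"type": dialog_action},
            "intent": {"name": intent_name, "state": state},
            "sessionAttributes": session_attributes or {},
        },
        "messages": [{"contentType": "PlainText", "content": message}],
    }
```

An orchestrating handler could combine these by formatting a template with the event's inputTranscript, calling Amazon Bedrock, extracting the tagged answer, and returning lex_response with a ConfirmIntent or Close dialog action.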

The Lambda execution role should have the following permissions.

Attach the Orchestration Lambda function to the Amazon Lex bot

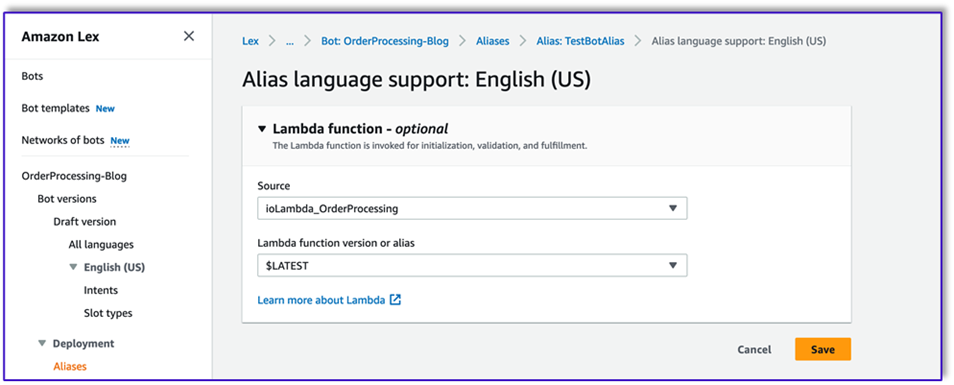

- After you create the function in the previous section, return to the Amazon Lex console and navigate to your bot.

- Under Languages in the navigation pane, choose English.

- For Source, choose your order processing bot.

- For Lambda function version or alias, choose $LATEST.

- Choose Save.

Create assisting Lambda functions

Complete the following steps to create additional Lambda functions:

- Create a Lambda function to query the DynamoDB table that you created earlier:

- Navigate to the Configuration tab in the Lambda function and choose Permissions.

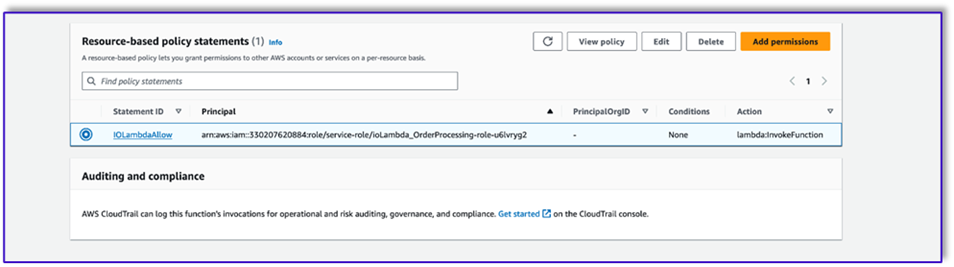

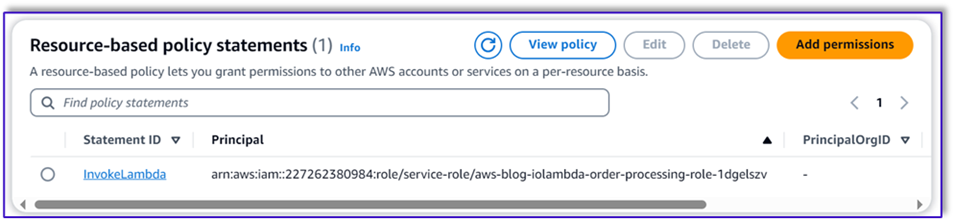

- Attach a resource-based policy statement allowing the order processing Lambda function to invoke this function.

- Navigate to the IAM execution role for this Lambda function and add a policy to access the DynamoDB table.

- Create another Lambda function to validate if all required attributes were passed from the customer. In the following example, we validate if the size attribute is captured for an order:

- Navigate to the Configuration tab in the Lambda function and choose Permissions.

- Attach a resource-based policy statement allowing the order processing Lambda function to invoke this function.
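The code for these two assisting functions is not included in the text. A minimal sketch of their core logic follows, assuming an order structure with an items list whose entries carry item, size, and quantity fields, and a Price attribute on each DynamoDB item. All of these field names are assumptions.

```python
from decimal import Decimal


def missing_sizes(order):
    """Return the names of ordered items that have no size."""
    return [line["item"] for line in order.get("items", []) if not line.get("size")]


def validation_handler(event, context):
    """Lambda-style handler: report whether the order can be processed."""
    missing = missing_sizes(event)
    return {"valid": not missing, "missing_size_for": missing}


def order_total(table, order):
    """Sum price times quantity, looking up each line by Item and Size.

    `table` is expected to behave like a Boto3 DynamoDB Table resource.
    A missing menu entry raises KeyError, which the caller should handle.
    """
    total = Decimal("0")
    for line in order["items"]:
        result = table.get_item(Key={"Item": line["item"], "Size": line["size"]})
        price = Decimal(str(result["Item"]["Price"]))
        total += price * line.get("quantity", 1)
    return total
```

Keeping the table as a parameter lets the same function run against the ItemDetails table in Lambda and against a stub in local tests.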

Test the solution

Now we can test the solution with example orders that customers place via Amazon Lex.

For our first example, the customer asks for a frappuccino, which is not on the menu. The model validates the request with the help of the order validator template and offers recommendations based on the menu. After the customer confirms their order, they are notified of the order total and order summary. The order will be processed based on the customer’s final confirmation.

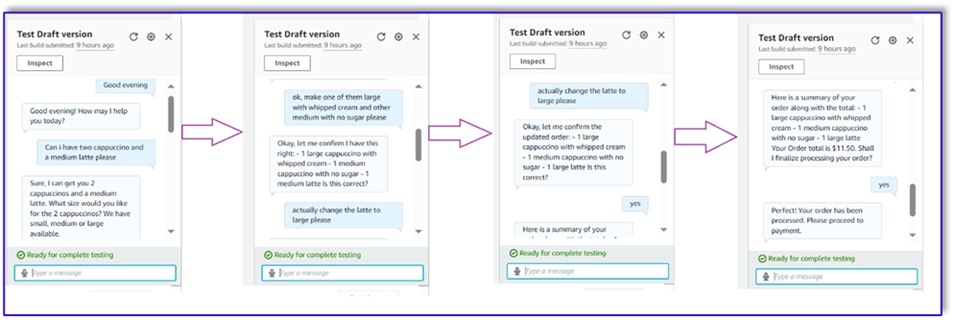

In our next example, the customer orders a large cappuccino and then changes the size from large to medium. The model captures all necessary changes and asks the customer to confirm the order. The model presents the order total and order summary, and processes the order based on the customer’s final confirmation.

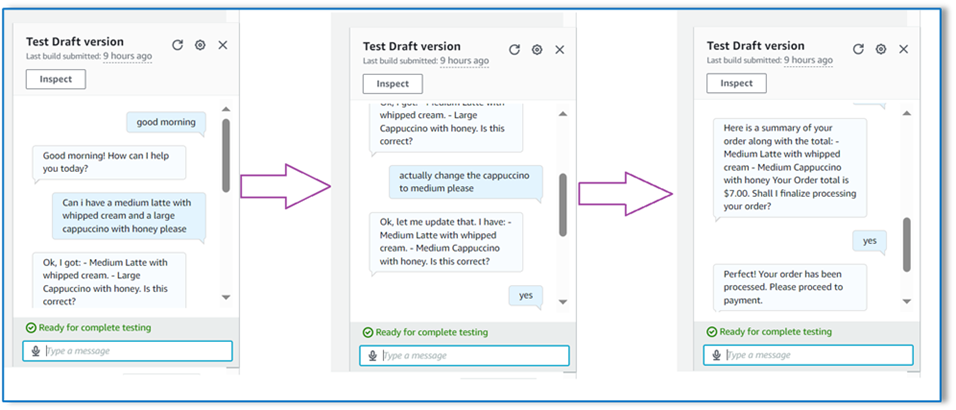

For our final example, the customer placed an order for multiple items and the size is missing for a couple of items. The model and Lambda function will verify if all required attributes are present to process the order and then ask the customer to provide the missing information. After the customer provides the missing information (in this case, the size of the coffee), they’re shown the order total and order summary. The order will be processed based on the customer’s final confirmation.

LLM limitations

LLM outputs are stochastic by nature, which means that the results from our LLM can vary in format, or even contain untruthful content (hallucinations). Therefore, developers need to rely on good error handling logic throughout their code in order to handle these scenarios and avoid a degraded end-user experience.
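One way to apply this is to treat every model reply as untrusted text. The following is a minimal sketch, assuming the model is asked to return the order as a JSON object; the function names and the retry count are assumptions.

```python
import json
import re


def parse_order_json(completion):
    """Parse the outermost {...} span in model text; return None on failure."""
    match = re.search(r"\{.*\}", completion, re.DOTALL)
    if not match:
        return None
    try:
        parsed = json.loads(match.group(0))
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None


def call_with_retry(generate, attempts=3):
    """Call generate() until it yields parseable JSON or attempts run out."""
    for _ in range(attempts):
        order = parse_order_json(generate())
        if order is not None:
            return order
    return None
```

When call_with_retry returns None, the Lambda function can fall back to asking the customer to repeat the request instead of failing the conversation.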

Clean up

If you no longer need this solution, you can delete the following resources:

- Lambda functions

- Amazon Lex bot

- DynamoDB table

- S3 bucket

Additionally, shut down the SageMaker Studio instance if the application is no longer required.

Cost assessment

For pricing information for the main services used by this solution, see the following:

- Amazon Bedrock Pricing

- Amazon DynamoDB Pricing

- AWS Lambda Pricing

- Amazon Lex Pricing

- Amazon S3 Pricing

Note that you can use Claude v2 without the need for provisioning, so overall costs remain at a minimum. To further reduce costs, you can configure the DynamoDB table with the on-demand setting.

Conclusion

This post demonstrated how to build a speech-enabled AI order processing agent using Amazon Lex, Amazon Bedrock, and other AWS services. We showed how prompt engineering with a powerful generative AI model like Claude can enable robust natural language understanding and conversation flows for order processing without the need for extensive training data.

The solution architecture uses serverless components like Lambda, Amazon S3, and DynamoDB to enable a flexible and scalable implementation. Storing the prompt templates in Amazon S3 allows you to customize the solution for different use cases.

Next steps could include expanding the agent’s capabilities to handle a wider range of customer requests and edge cases. The prompt templates provide a way to iteratively improve the agent’s skills. Additional customizations could involve integrating the order data with backend systems like inventory, CRM, or POS. Lastly, the agent could be made available across various customer touchpoints like mobile apps, drive-thru, kiosks, and more using the multi-channel capabilities of Amazon Lex.

To learn more, refer to the following related resources:

- Deploying and managing multi-channel bots:

- Prompt engineering for Claude and other models:

- Serverless architectural patterns for scalable AI assistants:

About the Authors

Moumita Dutta is a Partner Solution Architect at Amazon Web Services. In her role, she collaborates closely with partners to develop scalable and reusable assets that streamline cloud deployments and enhance operational efficiency. She is a member of the AI/ML community and a Generative AI expert at AWS. In her leisure time, she enjoys gardening and cycling.

Fernando Lammoglia is a Partner Solutions Architect at Amazon Web Services, working closely with AWS partners in spearheading the development and adoption of cutting-edge AI solutions across business units. A strategic leader with expertise in cloud architecture, generative AI, machine learning, and data analytics, he specializes in executing go-to-market strategies and delivering impactful AI solutions aligned with organizational goals. In his free time, he loves to spend time with his family and travel to other countries.

Mitul Patel is a Senior Solution Architect at Amazon Web Services. In his role as a cloud technology enabler, he works with customers to understand their goals and challenges, and provides prescriptive guidance to achieve their objectives with AWS offerings. He is a member of the AI/ML community and a Generative AI ambassador at AWS. In his free time, he enjoys hiking and playing soccer.

Author: Moumita Dutta