Use Amazon Bedrock to generate, evaluate, and understand code in your software development pipeline

Generative artificial intelligence (AI) models have opened up new possibilities for automating and enhancing software development workflows. Specifically, the emergent capability for generative models to produce code based on natural language prompts has opened many doors to how developers and DevOps professionals approach their work and improve their efficiency. In this post, we provide an overview of how to take advantage of the advancements of large language models (LLMs) using Amazon Bedrock to assist developers at various stages of the software development lifecycle (SDLC).

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI.

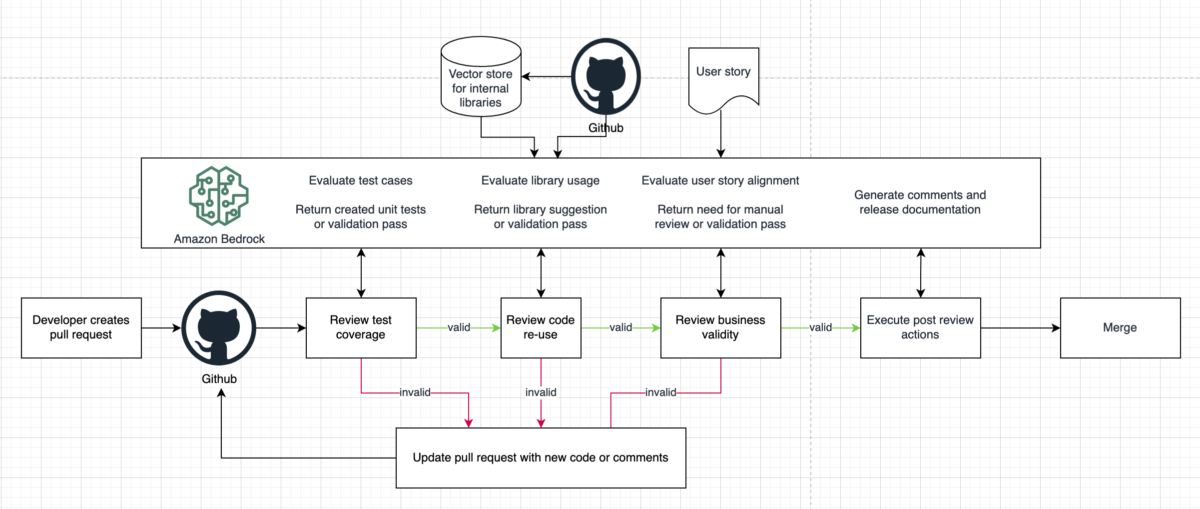

The following process architecture proposes an example SDLC flow that incorporates generative AI in key areas to improve the efficiency and speed of development.

The intent of this post is to focus on how developers can create their own systems to augment, write, and audit code by using models within Amazon Bedrock instead of relying on out-of-the-box coding assistants. We discuss the following topics:

- A coding assistant use case to help developers write code faster by providing suggestions

- How to use the code understanding capabilities of LLMs to surface insights and recommendations

- An automated application generation use case to generate functioning code and automatically deploy changes into a working environment

Considerations

It’s important to consider some technical options when choosing your model and approach to implementing this functionality at each step. One such option is the base model to use for the task. Because each model is trained on a different corpus of data, task performance inherently varies from model to model. Anthropic’s Claude 3 models on Amazon Bedrock, for example, write code effectively out of the box in many common programming languages, whereas other models may not reach that performance without further customization. Customization, however, is another technical choice to make. For instance, if your use case includes a less common language or framework, customizing the model through fine-tuning or using Retrieval Augmented Generation (RAG) may be necessary to achieve production-quality performance, but it involves more complexity and engineering effort to implement effectively.
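One way to ground the base model decision is to run the same small set of coding tasks against each candidate and compare pass rates. The following is a minimal sketch under stated assumptions, not a benchmark methodology: `pass_rates` is a hypothetical helper, each entry in `models` is any callable that sends a prompt to a model and returns its text output, and each task pairs a prompt with a check that you define.

```python
from typing import Callable, Dict, Sequence, Tuple

# A task is (prompt, check), where check inspects the model output.
Task = Tuple[str, Callable[[str], bool]]


def pass_rates(
    tasks: Sequence[Task],
    models: Dict[str, Callable[[str], str]],
) -> Dict[str, float]:
    """Return the fraction of tasks each candidate model passes."""
    if not tasks:
        raise ValueError("at least one task is required")
    rates: Dict[str, float] = {}
    for name, generate in models.items():
        passed = 0
        for prompt, check in tasks:
            try:
                passed += bool(check(generate(prompt)))
            except Exception:
                # A failed call or a crashing check counts as a failed task.
                pass
        rates[name] = passed / len(tasks)
    return rates
```

A low pass rate on tasks in your language or framework is one signal that customization, such as fine-tuning or RAG, may be worth the added effort.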

There is an abundance of literature breaking down these trade-offs; in this post, we only note that they deserve exploration in their own right. Our aim is simply to lay out the context that informs a builder’s initial steps in implementing a generative AI-powered SDLC.

Coding assistant

Coding assistants are a very popular use case, with an abundance of examples from which to choose. AWS offers several services that can assist developers, either through in-line completion with tools like Amazon CodeWhisperer or through natural language interaction with Amazon Q. Amazon Q for builders has several implementations of this functionality, such as:

- Amazon Q AWS expert interface

- Amazon Q Developer in IDEs

- Amazon EC2 instance type selection

- Generative SQL for Amazon Redshift Query Editor

Nearly all of the use cases described can integrate with a chat interface or assistant. This section focuses on direct code generation from natural language prompts, which is not to be confused with in-line generation tools that focus on autocompleting a coding task.

The key benefit of an assistant over in-line generation is that you can start new projects based on simple descriptions. For instance, you can describe that you want a serverless website that will allow users to post in blog fashion, and Amazon Q can start building the project by providing sample code and making recommendations on which frameworks to use to do this. This natural language entry point can give you a template and framework to operate within so you can spend more time on the differentiating logic of your application rather than the setup of repeatable and commoditized components.
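If you build your own assistant on Amazon Bedrock rather than using Amazon Q, the same natural language entry point can be sketched with the Bedrock Runtime Converse API. This is a minimal sketch, not a reference implementation: the model ID is an assumption (use one enabled in your account and Region), and the system prompt, inference settings, and the helper names `build_request`, `extract_text`, and `ask_assistant` are illustrative.

```python
from typing import Any, Optional

# Assumption: a Claude 3 model ID that is enabled in your account and Region.
DEFAULT_MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"


def build_request(description: str, model_id: str = DEFAULT_MODEL_ID) -> dict:
    """Build keyword arguments for the Bedrock Runtime Converse API."""
    return {
        "modelId": model_id,
        "system": [{
            "text": "You are a coding assistant. Propose a project structure, "
                    "recommend frameworks, and provide starter code."
        }],
        "messages": [{"role": "user", "content": [{"text": description}]}],
        "inferenceConfig": {"maxTokens": 2048, "temperature": 0.2},
    }


def extract_text(response: dict) -> str:
    """Concatenate the text blocks of a Converse response."""
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)


def ask_assistant(description: str, client: Optional[Any] = None) -> str:
    """Send a project description to the model and return its reply."""
    if client is None:
        import boto3  # imported lazily so the helpers above work without AWS access
        client = boto3.client("bedrock-runtime")
    return extract_text(client.converse(**build_request(description)))
```

For example, `ask_assistant("I want a serverless website that lets users post in blog fashion")` would return the model's suggested frameworks and starter code as text, assuming valid AWS credentials and model access.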

Code understanding

Companies that begin by using generative AI to augment the productivity of individual developers commonly go on to use LLMs to infer the meaning and functionality of code, improving the reliability, efficiency, security, and speed of the development process. Code understanding by humans is a central part of the SDLC: it underpins creating documentation, performing code reviews, and applying best practices. Onboarding new developers can be a challenge even for mature teams. Instead of a more senior developer taking time to respond to questions, an LLM with awareness of the code base and the team’s coding standards could be used to explain sections of code and design decisions to the new team member. The onboarding developer has everything they need with a rapid response time and the senior developer can focus on building. In addition to user-facing behaviors, this same mechanism can be repurposed to work completely behind the scenes to augment existing continuous integration and continuous delivery (CI/CD) processes as an additional reviewer.

For instance, you can use prompt engineering techniques to guide and automate the application of coding standards, or include the existing code base as reference material so the model can use custom APIs. As a proactive measure, you can prefix each prompt with a reminder to follow the coding standards, retrieving them from document storage and passing them to the model as context with the prompt. As a retroactive measure, you can add a step during the review process to check the written code against the standards to enforce adherence, similar to how a team code review would work. For example, let’s say that one of the team’s standards is to reuse components. During the review step, the model can read over a new code submission, note that the component already exists in the code base, and suggest that the reviewer reuse the existing component instead of recreating it.
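The proactive and retroactive measures described above can be sketched as two prompt builders. This is a minimal sketch under stated assumptions: `load_standards` and `list_components` are hypothetical callables that stand in for your document storage and code base index, `ask_model` is any callable that sends a prompt to a model and returns its text, and the prompt wording is illustrative.

```python
from typing import Callable, Sequence


def with_standards(task: str, standards: str) -> str:
    """Proactive measure: prefix a generation prompt with the team's standards."""
    return (
        "Follow these coding standards when writing code:\n"
        f"{standards}\n\n"
        f"Task:\n{task}"
    )


def build_review_prompt(
    submission: str, standards: str, existing_components: Sequence[str]
) -> str:
    """Retroactive measure: ask the model to check a submission against the standards."""
    components = "\n".join(f"- {name}" for name in existing_components)
    return (
        "You are reviewing a code submission.\n"
        f"Coding standards:\n{standards}\n\n"
        f"Components that already exist in the code base:\n{components}\n\n"
        f"Submission:\n{submission}\n\n"
        "List each violation of the standards. If the submission recreates an "
        "existing component, recommend reusing that component instead."
    )


def review(
    submission: str,
    load_standards: Callable[[], str],         # hypothetical: reads from document storage
    list_components: Callable[[], Sequence[str]],  # hypothetical: reads a code base index
    ask_model: Callable[[str], str],           # hypothetical: wraps your model call
) -> str:
    """Run one automated review pass and return the model's findings."""
    prompt = build_review_prompt(submission, load_standards(), list_components())
    return ask_model(prompt)
```

Keeping the storage, index, and model calls as injected callables lets the same review step run in a chat interface or as an additional reviewer in a CI/CD pipeline.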

The following diagram illustrates this type of workflow.

Application generation

You can extend the concepts from the use cases described in this post to create a full application generation implementation. In the traditional SDLC, a human creates a set of requirements, makes a design for the application, writes some code to implement that design, builds tests, and receives feedback on the system from external sources or people, and then the process repeats. The bottleneck in this cycle typically comes at the implementation and testing phases. An application builder needs to have substantive technical skills to write code effectively, and numerous iterations are typically required to debug and perfect code, even for the most skilled builders. In addition, knowledge of a company’s existing code base, APIs, and IP is fundamental to implementing an effective solution, and it can take humans a long time to acquire. This can slow down the time to innovation for new teammates or teams with technical skills gaps. As mentioned earlier, if a model can both create and interpret code, you can build pipelines that perform the developer iterations of the SDLC by feeding the outputs of the model back in as input.

The following diagram illustrates this type of workflow.

For example, you can use natural language to ask a model to write an application that prints all the prime numbers between 1 and 100. It returns a block of code that can be run with applicable tests defined. If the program doesn’t run or some tests fail, the error and failing code can be fed back into the model, asking it to diagnose the problem and suggest a solution. The next step in the pipeline would be to take the original code, along with the diagnosis and suggested solution, and stitch the code snippets together to form a new program. The SDLC restarts in the testing phase to get new results, and the pipeline either iterates again or produces a working application. With this basic framework, an increasing number of components can be added in the same manner as in a traditional human-based workflow. This modular approach can be continuously improved until there is a robust and powerful application generation pipeline that simply takes in a natural language prompt and outputs a functioning application, handling all of the error correction and best practice adherence behind the scenes.
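The generate, test, and feed-back loop can be sketched as follows. This is a simplified sketch under stated assumptions, not a full pipeline: `generate` is any callable that sends a prompt to a model and returns a complete Python program, the program is assumed to contain its own tests so that a nonzero exit code signals failure, and the repair step asks the model for a whole corrected program rather than stitching snippets together. The helper names are illustrative.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, Tuple


def run_program(code: str, timeout: float = 10.0) -> Tuple[bool, str]:
    """Run code in a separate Python process; return (succeeded, combined output)."""
    with tempfile.TemporaryDirectory() as workdir:
        path = Path(workdir) / "candidate.py"
        path.write_text(code, encoding="utf-8")
        try:
            result = subprocess.run(
                [sys.executable, str(path)],
                capture_output=True, text=True, timeout=timeout,
            )
        except subprocess.TimeoutExpired:
            return False, f"timed out after {timeout} seconds"
    return result.returncode == 0, result.stdout + result.stderr


def generate_until_passing(
    task: str, generate: Callable[[str], str], max_attempts: int = 3
) -> str:
    """Ask for a program, run it, and feed failures back until it passes."""
    prompt = task
    for _ in range(max_attempts):
        code = generate(prompt)
        succeeded, output = run_program(code)
        if succeeded:
            return code
        # Feed the failing code and its error output back to the model.
        prompt = (
            f"Task:\n{task}\n\n"
            f"This program failed:\n{code}\n\n"
            f"Error output:\n{output}\n\n"
            "Diagnose the problem and return a corrected, complete program."
        )
    raise RuntimeError(f"no passing program after {max_attempts} attempts")
```

Because this runs model-generated code, a real pipeline would execute it in an isolated environment rather than on the build host.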

The following diagram illustrates this advanced workflow.

Conclusion

We are at the point in the adoption curve of generative AI where teams can get real productivity gains from the variety of techniques and tools available. In the near future, it will be imperative to take advantage of these productivity gains to stay competitive. One thing we do know is that the landscape will continue to progress and change rapidly, so building a flexible system that tolerates change is key. Developing your components in a modular fashion allows for stability in the face of an ever-changing technical landscape while being ready to adopt the latest technology at each step of the way.

For more information about how to get started building with LLMs, see these resources:

- How Q4 Inc. used Amazon Bedrock, RAG, and SQLDatabaseChain to address numerical and structured dataset challenges building their Q&A chatbot

- Boosting RAG-based intelligent document assistants using entity extraction, SQL querying, and agents with Amazon Bedrock

- Create summaries of recordings using generative AI with Amazon Bedrock and Amazon Transcribe

About the Authors

Ian Lenora is an experienced software development leader who focuses on building high-quality cloud native software, and exploring the potential of artificial intelligence. He has successfully led teams in delivering complex projects across various industries, optimizing efficiency and scalability. With a strong understanding of the software development lifecycle and a passion for innovation, Ian seeks to leverage AI technologies to solve complex problems and create intelligent, adaptive software solutions that drive business value.

Cody Collins is a New York-based Solutions Architect at Amazon Web Services, where he collaborates with ISV customers to build cutting-edge solutions in the cloud. He has extensive experience in delivering complex projects across diverse industries, optimizing for efficiency and scalability. Cody specializes in AI/ML technologies, enabling customers to develop ML capabilities and integrate AI into their cloud applications.

Samit Kumbhani is an AWS Senior Solutions Architect in the New York City area with over 18 years of experience. He currently collaborates with Independent Software Vendors (ISVs) to build highly scalable, innovative, and secure cloud solutions. Outside of work, Samit enjoys playing cricket, traveling, and biking.

Author: Ian Lenora